Imagine partnering with an AI solution crafted to fit your organization’s unique rhythm, one that speaks your industry’s language, anticipates your challenges, and streamlines your processes from day one.

In sectors where precision is paramount, like healthcare compliance or millisecond fraud detection, off-the-shelf tools often miss the mark.

Custom AI delivers the tailored insights and seamless integration your team needs to excel.

Core Principles of Custom AI Solutions

Custom AI stands out by combining domain-specific fine-tuning, proprietary data utilization, human-in-the-loop validation, and contextual understanding.

These principles ensure models are aligned with industry terminology, trained on curated datasets, iteratively reviewed to reduce bias, and equipped to capture complex relationships.

Key takeaways:

- Fine-tune models on your industry data

- Involve experts in the validation process

- Leverage semantic techniques for deeper insights

Comparing Custom and Off-the-Shelf AI

Off-the-shelf AI solutions offer rapid deployment using pre-trained, generic models that require minimal setup. However, they often lack the precision and contextual relevance needed for specialized workflows.

Custom AI solutions, by contrast, train on proprietary data and integrate deeply into organizational processes, delivering higher accuracy and adaptability.

Though they require greater upfront investment for fine-tuning and integration, the long-term benefits include improved operational efficiency and scalability.

| Feature | Off-the-Shelf AI | Custom AI |
| --- | --- | --- |
| Data Source | Public/generic | Proprietary, domain-specific |
| Deployment Speed | Very fast | Requires fine-tuning |
| Workflow Integration | Limited | Seamless |
| Adaptability | Low | High |
| Upfront Investment | Low | Higher with long-term ROI |

The Custom AI Development Process

A structured approach ensures that custom AI projects stay on track and deliver results.

- Problem Definition: Engage stakeholders to identify pain points and define measurable outcomes. A logistics provider, for example, may seek to optimize delivery routes based on real-time traffic and historical patterns.

- Data Preparation: Gather, clean and augment datasets. Techniques such as synthetic data generation address edge cases. Tesla’s autonomous driving team, for instance, used simulated scenarios to improve performance in rare conditions.

- Model Training and Testing: Employ hyperparameter tuning (for example, grid search scored with cross-validation) to balance accuracy, latency and generalization. Evaluate models not only on predictive metrics but also on operational criteria like response time and scalability.

- Integration: Design middleware or API orchestration layers that translate between AI models and legacy systems. Robust testing ensures data consistency and performance under real-world loads.
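The grid search and cross-validation named in the training step can be sketched in a few lines. This is a minimal illustration only, assuming a toy one-dimensional ridge regression and made-up data; the function names (`fit_ridge_1d`, `grid_search_cv`) and the candidate values are hypothetical, and a real project would normally rely on an established ML library instead.

```python
from statistics import mean

def fit_ridge_1d(xs, ys, lam):
    """Closed-form 1-D ridge regression without intercept: w = sum(xy) / (sum(x^2) + lam)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)

def mse(w, xs, ys):
    """Mean squared error of the prediction w * x."""
    return mean((w * x - y) ** 2 for x, y in zip(xs, ys))

def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) pairs; fold i validates on every k-th sample from i."""
    for i in range(k):
        val = list(range(i, n, k))
        val_set = set(val)
        train = [j for j in range(n) if j not in val_set]
        yield train, val

def grid_search_cv(xs, ys, lambdas, k=5):
    """Return (best_lambda, scores), where scores maps lambda to mean validation MSE."""
    scores = {}
    for lam in lambdas:
        fold_errors = []
        for train, val in k_fold_indices(len(xs), k):
            w = fit_ridge_1d([xs[j] for j in train], [ys[j] for j in train], lam)
            fold_errors.append(mse(w, [xs[j] for j in val], [ys[j] for j in val]))
        scores[lam] = mean(fold_errors)
    best = min(scores, key=scores.get)
    return best, scores

# Toy data (illustrative only): y is roughly 2x with small alternating noise.
xs = [float(i) for i in range(1, 21)]
ys = [2.0 * x + (0.5 if i % 2 else -0.5) for i, x in enumerate(xs)]
best_lambda, cv_scores = grid_search_cv(xs, ys, lambdas=[0.0, 1.0, 100.0, 10000.0])
```

Each candidate value is scored only on data held out from fitting, which is what lets the search favor generalization rather than fit to the training set.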

Requirements Gathering and Analysis

Accurate requirements prevent costly rework and align AI solutions with real-world workflows.

Methods:

- Stakeholder Interviews to capture business goals and pain points

- Process Mining to derive workflow maps from system logs

- Regulatory Review to embed compliance needs (e.g., HIPAA) early

Benefits:

- Reveals hidden inefficiencies and workflow variations

- Defines precise, data-driven requirements

- Minimizes redesign costs by addressing constraints upfront
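To make the process mining method concrete, here is a minimal sketch of one basic building block: counting which activity directly follows which within each case. The log layout `(case_id, timestamp, activity)` and the activity names are assumptions for illustration; real system logs usually need cleaning before they reach this shape.

```python
from collections import Counter, defaultdict

def directly_follows(event_log):
    """Count how often activity a is directly followed by activity b within a case.

    event_log: iterable of (case_id, timestamp, activity) tuples.
    """
    by_case = defaultdict(list)
    for case_id, timestamp, activity in event_log:
        by_case[case_id].append((timestamp, activity))

    edges = Counter()
    for events in by_case.values():
        events.sort()  # order each case by timestamp
        activities = [activity for _, activity in events]
        edges.update(zip(activities, activities[1:]))
    return edges

# Hypothetical log rows: (case_id, timestamp, activity)
log = [
    ("c1", 1, "receive"), ("c1", 2, "review"), ("c1", 3, "approve"),
    ("c2", 1, "receive"), ("c2", 2, "review"), ("c2", 3, "rework"),
    ("c2", 4, "review"), ("c2", 5, "approve"),
]
dfg = directly_follows(log)
```

In this toy log, the `review` to `rework` edge is the kind of workflow variation that interviews alone may not surface.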

Model Training, Testing and Iteration

Effective model performance depends on both precise hyperparameter tuning and validation against real-world conditions. By calibrating parameters like learning rate, regularization strength and batch size, you strike the right balance between accuracy and efficiency.

Testing should then use domain-specific benchmarks and respect operational constraints. For example, a fraud-detection model might accept a minor accuracy trade-off to guarantee sub-second response times.

Continuous cycles of training, evaluation and expert review ensure the AI stays aligned with evolving business needs.
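The fraud-detection trade-off above can be expressed as a simple selection rule: filter candidates by the latency budget first, then pick the most accurate one that remains. The sketch below assumes hypothetical candidate names and made-up accuracy and latency figures; none of them come from a real benchmark.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float        # validation accuracy in [0, 1]
    p95_latency_ms: float  # 95th-percentile response time in milliseconds

def select_model(candidates, latency_budget_ms):
    """Pick the most accurate candidate whose p95 latency fits the budget."""
    eligible = [c for c in candidates if c.p95_latency_ms <= latency_budget_ms]
    if not eligible:
        raise ValueError("no candidate meets the latency budget")
    return max(eligible, key=lambda c: c.accuracy)

# Illustrative numbers only.
candidates = [
    Candidate("large_ensemble", accuracy=0.987, p95_latency_ms=1800.0),
    Candidate("gradient_boosted", accuracy=0.981, p95_latency_ms=420.0),
    Candidate("logistic_baseline", accuracy=0.952, p95_latency_ms=35.0),
]
chosen = select_model(candidates, latency_budget_ms=1000.0)
```

With a one-second budget, the slightly less accurate `gradient_boosted` candidate is chosen over the slower ensemble, which mirrors the trade-off described in the text.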

Integration with Existing Systems

Integration connects the trained model to the systems your teams already use. Middleware or API orchestration layers translate between the model’s inputs and outputs and the formats that legacy systems expect.

Integration testing should then confirm data consistency across systems and verify performance under real-world loads before rollout.
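As a rough illustration of the middleware idea from the development process, the sketch below wraps a model in an adapter that accepts a legacy record format and returns status codes a legacy system might already understand. Everything specific here is an assumption: the pipe-delimited layout, the `OK`/`HOLD`/`ERR` codes, and the stand-in `toy_model` are hypothetical.

```python
from typing import Callable, Dict

def parse_legacy_record(line: str) -> Dict[str, float]:
    """Translate a hypothetical pipe-delimited legacy record into model features.

    Assumed layout: ACCOUNT_ID|AMOUNT_CENTS|COUNTRY_CODE
    """
    account_id, amount_cents, country = line.strip().split("|")
    return {
        "amount": int(amount_cents) / 100.0,
        "is_foreign": 0.0 if country == "US" else 1.0,
    }

def make_adapter(model: Callable[[Dict[str, float]], float], threshold: float):
    """Wrap a model so callers can send legacy records and receive legacy status codes."""
    def handle(line: str) -> str:
        try:
            features = parse_legacy_record(line)
        except ValueError:
            return "ERR"  # malformed record: return an error code instead of crashing
        return "HOLD" if model(features) >= threshold else "OK"
    return handle

# Stand-in scoring function; a real deployment would call the trained model here.
def toy_model(features: Dict[str, float]) -> float:
    return min(1.0, features["amount"] / 10_000.0 + 0.3 * features["is_foreign"])

handle = make_adapter(toy_model, threshold=0.5)
```

Keeping the translation in one place means the model and the legacy system can change independently, and malformed records are handled at the boundary rather than inside the model.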

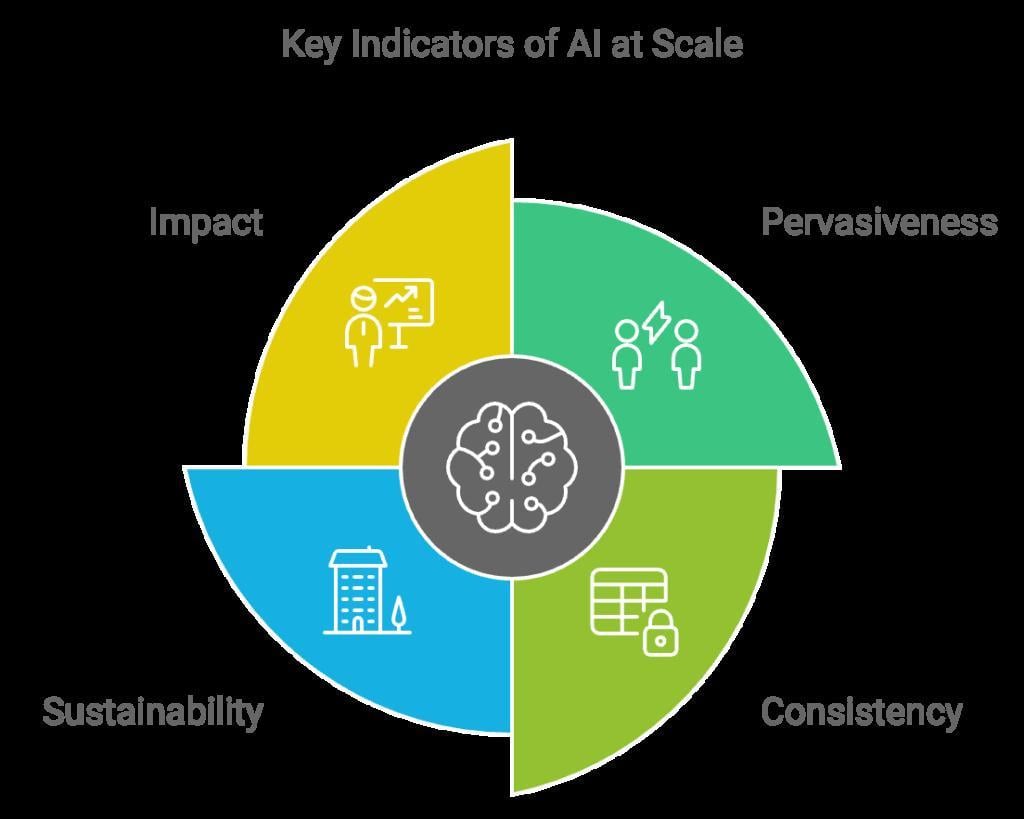

Business Value of Custom AI

Tailored AI systems deliver higher operational efficiency by automating repetitive tasks, optimizing resource allocation and reducing human error. Modern fine-tuning platforms lower barriers to entry, making custom AI viable for a wider range of organizations.

Proprietary data fine-tuning empowers competitive differentiation: a logistics firm can outperform rivals by training on its own delivery patterns, while a retailer can boost sales through hyper-targeted demand forecasting.

Enhancing Operational Efficiency

Process mining combined with custom AI reveals hidden bottlenecks and dynamically optimizes workflows. By analyzing event logs in real time, businesses can preempt delays and continually refine processes.

This adaptive approach drives sustained efficiency gains beyond what static process maps allow.
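One simple way to surface a bottleneck from event logs is to measure the average wait between consecutive activities and report the slowest handoff. The sketch below works on a small batch rather than a real-time stream, and the log layout, activity names, and timings are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def slowest_transition(event_log):
    """Return ((from_activity, to_activity), mean_wait) for the slowest handoff.

    event_log: iterable of (case_id, timestamp_minutes, activity) tuples.
    """
    by_case = defaultdict(list)
    for case_id, ts, activity in event_log:
        by_case[case_id].append((ts, activity))

    waits = defaultdict(list)
    for events in by_case.values():
        events.sort()  # order each case by timestamp
        for (t0, a0), (t1, a1) in zip(events, events[1:]):
            waits[(a0, a1)].append(t1 - t0)

    averages = {pair: mean(durations) for pair, durations in waits.items()}
    worst = max(averages, key=averages.get)
    return worst, averages[worst]

# Hypothetical order-fulfilment log, timestamps in minutes.
log = [
    ("o1", 0, "order"), ("o1", 5, "pick"), ("o1", 65, "pack"), ("o1", 70, "ship"),
    ("o2", 0, "order"), ("o2", 7, "pick"), ("o2", 97, "pack"), ("o2", 100, "ship"),
]
pair, wait = slowest_transition(log)
```

Rerunning the same calculation as new events arrive is one way to approximate the continual refinement described above.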

Achieving Competitive Differentiation

Domain-specific fine-tuning turns unique operational data into actionable insights. In finance, proprietary transaction histories enable fraud detection models to adapt to subtle, evolving patterns.

In retail, regional sales data yields personalized recommendation engines that more accurately predict consumer behavior. Custom AI evolves with the business, maintaining relevance as markets shift.

Scalability:

Custom AI uses modular microservices and hybrid cloud/on-premise strategies to handle increasing data volumes, optimize resource utilization, and control costs as your operations expand.

Technical Considerations in Custom AI

Successful custom AI depends on robust data pipelines, scalable architectures, and transparent decision-making.

- Data Quality and Preparation: A large share of project effort typically goes into cleaning and structuring data. Poorly formatted or biased inputs can skew results and undermine trust. Robust governance and diverse datasets are essential to reliable performance.

- Modular, Scalable Architecture: Segmenting AI systems into independent layers (data ingestion, model training, deployment) enables targeted updates and scaling without full system overhauls. Hybrid cloud/on-premise strategies balance cost and flexibility.

- Explainability and Compliance: Transparent AI frameworks break down model decisions into human-understandable explanations. This is vital in regulated industries, where auditability and accountability are non-negotiable.
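For a linear scoring model, breaking a decision into human-understandable parts can be as direct as listing each feature's contribution to the score. The sketch below assumes a hypothetical fraud score with made-up weights and feature names; more complex models generally need dedicated attribution methods, which this example does not cover.

```python
def explain_linear(weights, bias, features):
    """Break a linear score into per-feature contributions, largest magnitude first."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Hypothetical weights and inputs, for illustration only.
weights = {"amount_zscore": 0.8, "new_device": 1.5, "account_age_years": -0.2}
score, ranked = explain_linear(
    weights,
    bias=-1.0,
    features={"amount_zscore": 2.0, "new_device": 1.0, "account_age_years": 5.0},
)
```

The ranked list gives a reviewer or auditor a per-decision record of which inputs pushed the score up and which pulled it down.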

Data Privacy and Compliance

Building privacy from day one is non-negotiable.

- Privacy-enhancing technologies such as differential privacy and homomorphic encryption safeguard sensitive information.

- Differential privacy adds controlled noise to query results or model outputs, limiting the risk of re-identification attacks.

- Homomorphic encryption enables computation on encrypted data, so protected data can be processed without being decrypted.

Embedding these techniques from the design phase supports compliance with regulations like HIPAA and GDPR while limiting the loss of model utility.
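Controlled noise addition is commonly implemented with the Laplace mechanism. The sketch below applies it to a counting query, assuming a hypothetical patient list and an illustrative epsilon; it is meant to show the idea, not to serve as a production-grade implementation, since naive floating-point sampling has known weaknesses and vetted libraries are the safer choice.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample, drawn as the difference of two i.i.d. exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng=None):
    """Epsilon-differentially-private count of records matching predicate.

    A counting query has sensitivity 1 (adding or removing one person changes
    the count by at most 1), so the Laplace scale is 1 / epsilon.
    """
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical records; the seed is fixed only to make the example repeatable.
patients = [{"age": a} for a in (34, 71, 68, 45, 80, 59)]
noisy = private_count(patients, lambda p: p["age"] >= 65, epsilon=0.5, rng=random.Random(7))
```

A smaller epsilon means more noise and stronger privacy, so the choice of epsilon is where the balance between privacy and utility is set.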

Scalability and Future-Proofing

Future-ready AI systems rely on modular designs, automated retraining pipelines, and hybrid infrastructure strategies.

Top 3 tips:

- Isolate data ingestion, training, and deployment layers

- Implement CI/CD pipelines for continuous updates

- Blend on-premise and cloud resources to control costs
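An automated retraining pipeline needs some signal that tells it when to run. One simple option, sketched below, is a rolling accuracy check over recent predictions; the class name, window size, and threshold are hypothetical, and real pipelines often combine this with data-drift checks.

```python
from collections import deque

class RetrainingMonitor:
    """Track recent prediction outcomes and flag when rolling accuracy drops too low."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # True means the prediction was correct
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        """Store whether the latest prediction matched the observed outcome."""
        self.outcomes.append(predicted == actual)

    def should_retrain(self):
        """Return True once a full window shows accuracy below the threshold."""
        # Wait for a full window so a few early misses do not trigger a retrain.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return sum(self.outcomes) / len(self.outcomes) < self.min_accuracy

monitor = RetrainingMonitor(window=10, min_accuracy=0.8)
for _ in range(10):
    monitor.record(predicted="fraud", actual="fraud")
```

A CI/CD job could poll `should_retrain()` and start the training layer only when needed, which keeps the ingestion, training, and deployment layers decoupled.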

FAQ

What are the key benefits of partnering with a custom AI development company?

Tailored solutions align with unique workflows, leveraging entity relationships to structure data, salience analysis to prioritize critical information and co-occurrence optimization to uncover meaningful patterns. This approach delivers operational efficiency, seamless system integration and long-term adaptability.

How does salience analysis improve model relevance and accuracy?

By identifying and weighting high-value data points, salience analysis reduces noise and focuses the AI on information that drives decision-making. Combined with structured entity relationships, it refines contextual understanding, yielding more precise outputs.
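Salience analysis has no single standard definition, so as a stand-in the sketch below uses TF-IDF, one common way to weight terms by how distinctive they are within a collection. The sample documents are invented, and a production system might use a quite different scoring method.

```python
import math
from collections import Counter

def tf_idf(documents):
    """Return one {term: weight} dict per document.

    tf = term count / document length; idf = log(N / document frequency).
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    weights = []
    for tokens in tokenized:
        counts = Counter(tokens)
        weights.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights

# Invented examples for illustration.
docs = [
    "claim denied missing authorization",
    "claim approved",
    "claim pending missing signature",
]
scores = tf_idf(docs)
```

Here `claim` appears in every document and receives a weight of zero, while rarer terms such as `authorization` score higher, which is the noise-reduction effect described above.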

What role do entity relationships play in data structuring?

Entity relationships map connections between key concepts, organizing data into meaningful networks. This enhances the model’s ability to retrieve and process relevant information, enabling context-aware insights tailored to business objectives.

How does co-occurrence optimization enhance performance?

By analyzing patterns of term co-occurrence, this technique infers deeper semantic relationships within datasets. When integrated with entity structures and salience weighting, it enables highly accurate, domain-specific outputs.
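One standard way to quantify term co-occurrence is pointwise mutual information (PMI), which compares how often two terms appear together with how often they would if they were independent. The sketch below is an assumption about how such an analysis might look, using invented documents and document-level co-occurrence.

```python
import math
from collections import Counter
from itertools import combinations

def pmi_scores(documents):
    """Pointwise mutual information for term pairs that share a document.

    PMI(a, b) = log(P(a, b) / (P(a) * P(b))), with probabilities estimated
    as the fraction of documents containing the term or pair.
    """
    term_sets = [set(doc.lower().split()) for doc in documents]
    n = len(term_sets)
    term_count = Counter(t for terms in term_sets for t in terms)
    pair_count = Counter(
        pair for terms in term_sets for pair in combinations(sorted(terms), 2)
    )
    return {
        (a, b): math.log((count / n) / ((term_count[a] / n) * (term_count[b] / n)))
        for (a, b), count in pair_count.items()
    }

# Invented examples for illustration.
docs = [
    "wire transfer flagged",
    "wire transfer cleared",
    "card payment cleared",
    "card payment flagged",
]
pmi = pmi_scores(docs)
```

In this toy set, `transfer` and `wire` always appear together and get a positive score, while `flagged` and `wire` co-occur no more than chance would predict and score zero.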

What factors should businesses consider when selecting a custom AI partner?

Evaluate the provider’s domain expertise, data structuring capabilities, governance practices and track record of delivering scalable, secure and compliant solutions. Transparent communication, collaborative methodologies and ongoing support are essential for lasting success.

Conclusion

Custom AI is more than a technological investment; it’s a strategic partnership that evolves with your organization’s unique needs. By tailoring solutions to your data, workflows, and compliance requirements, you unlock precision, efficiency, and competitive advantage.

Whether you’re aiming to streamline operations, differentiate in the marketplace, or future-proof your systems, custom AI offers a scalable, resilient path forward.

Call to Action

Ready to transform your business with custom AI? Sign up now and build your own Custom AI Solution.
