Integrating CustomGPT.ai with the Shell programming language opens up practical possibilities for command-line application development. Shell scripting, known for its simplicity and efficiency in automating tasks, gains new capabilities through CustomGPT.ai’s RAG API, the interface central to how CustomGPT.ai works. In this article, we explore the practical side of using Shell to interact with CustomGPT.ai and show how developers can build chatbot applications from the command line. By starting from pre-built code snippets provided by CustomGPT.ai, users can add AI capabilities to their command-line applications with little setup.

Let’s see how this integration enhances the functionality of command-line tools.

Intro to Shell and its key features

The Shell programming language, a cornerstone of command-line interfaces, offers developers a versatile toolset for automating tasks and interacting with operating systems. With its simple syntax and powerful capabilities, Shell lets users execute commands, manipulate files, and manage system resources efficiently. Its primary purpose is to provide a streamlined interface to the underlying operating system, which makes it an indispensable tool for software development.

Key features of Shell programming include:

- Shell scripts allow users to write sequences of commands that can be executed automatically, enabling the automation of repetitive tasks.

- Shell provides a mechanism for users to execute system commands directly from the command line, making it easy to interact with the operating system.

- Shell allows users to manage running processes, enhancing system control and resource management.
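The three features above can be seen in a few lines of Bash. This is an illustrative sketch; the function names and the `*.log` file pattern are hypothetical examples rather than part of any tool.

```shell
#!/usr/bin/env bash
# Illustrative sketch of the Shell features listed above.
# count_lines_per_file, run_in_background, and the *.log pattern are hypothetical.

# Automation: repeat the same steps for every matching file in a directory.
count_lines_per_file() {
  local dir=$1 file
  for file in "$dir"/*.log; do
    [ -e "$file" ] || continue   # the glob matched nothing; skip it
    # System commands: wc counts lines; tr strips the padding some wc versions add.
    printf '%s %s\n' "$(basename "$file")" "$(wc -l < "$file" | tr -d ' ')"
  done
}

# Process management: start a command as a background job, then wait for it.
run_in_background() {
  "$@" &
  local pid=$!
  wait "$pid"                    # wait returns the job's exit status
  echo "job $pid exited with status $?"
}
```

For example, `count_lines_per_file /var/log` prints one "name count" line per `.log` file, and `run_in_background sleep 2` reports the job's exit status once it finishes.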

Moreover, Shell scripts can call external web APIs, including RAG APIs, through command-line HTTP clients such as curl. This lets developers bring third-party services and resources into their Shell scripts without leaving the terminal.
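A script that calls external APIs depends on tools such as curl being installed. A common Bash pattern, shown here as a minimal sketch with a hypothetical `require_cmd` helper, is to check for them up front with the built-in `command -v`:

```shell
#!/usr/bin/env bash
# Hypothetical helper: fail early, with a clear message, if a required
# command-line tool is missing from PATH.
require_cmd() {
  local cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      printf 'error: required command not found: %s\n' "$cmd" >&2
      return 1
    fi
  done
}
```

An API-calling script would typically begin with `require_cmd curl jq || exit 1`, so a missing dependency produces one readable error instead of a confusing failure halfway through.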

CustomGPT.ai

Now, let’s explore how CustomGPT.ai complements Shell programming through its RAG API integration:

- CustomGPT.ai offers a comprehensive RAG API that allows developers to interact with its AI models programmatically, enabling the integration of custom chatbots into Shell scripts.

- With CustomGPT.ai’s RAG API, developers can easily access features such as text generation, analysis, and language translation, enhancing the functionality of their Shell scripts.

- Integrating CustomGPT.ai with Shell is straightforward thanks to its well-documented RAG API and support for various programming languages. From a Shell script, developers can call CustomGPT.ai with simple HTTP requests; SDKs are also available for popular programming languages.

- By integrating CustomGPT.ai with Shell, developers can create intelligent command-line tools that can leverage AI chatbots to automate tasks, generate text, and provide valuable insights, enhancing productivity and efficiency in various applications.

Integrating CustomGPT.ai with Shell: A Practical Example

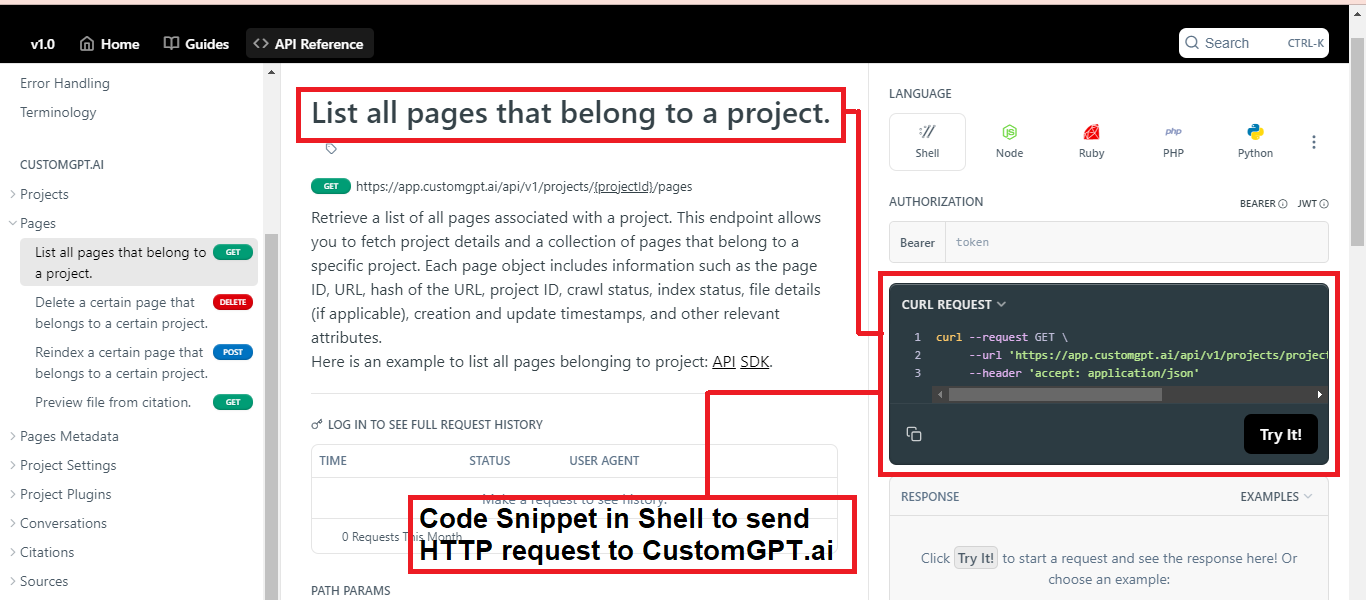

In this example, we’ll explore how to leverage a pre-built shell code snippet to list all pages associated with a project within CustomGPT.ai. By utilizing this snippet, developers can easily retrieve project details along with a collection of pages specific to their project. Each page object contains essential information such as page ID, URL, project ID, crawl status, index status, and creation/update timestamps, among other relevant attributes.

In this example, we’re using a simple command-line tool called ‘curl’ to interact with CustomGPT.ai’s RAG API. The provided code snippet makes an HTTP GET request to CustomGPT.ai’s RAG API endpoint to fetch information about the pages belonging to a specific project.

Here’s what each part of the code does:

- ‘curl’: This is the command-line tool we’re using to make the HTTP request.

- ‘--request GET’: This tells ‘curl’ to make a GET request, the HTTP method used to retrieve data from a server.

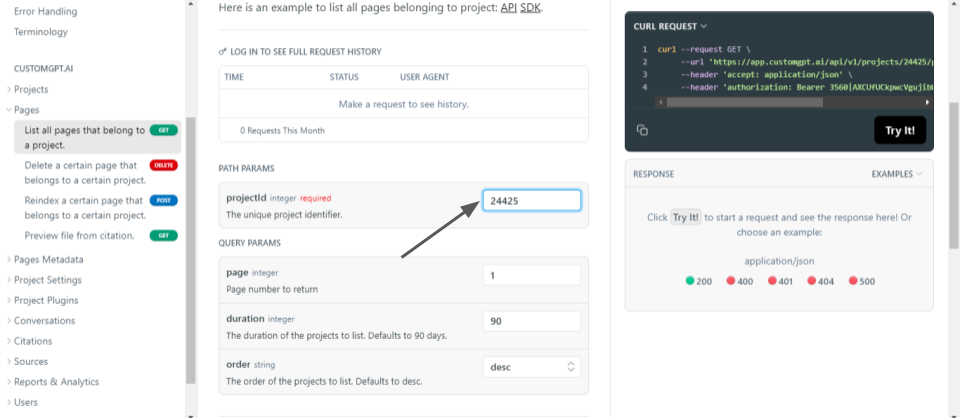

- ‘--url’: Specifies the URL of the RAG API endpoint we’re querying. It includes the project ID along with parameters such as page number, duration, and order that specify what data we want to retrieve.

- ‘--header’: Sets the ‘accept’ header to indicate that we want the response in JSON format, a data format commonly used by web APIs.

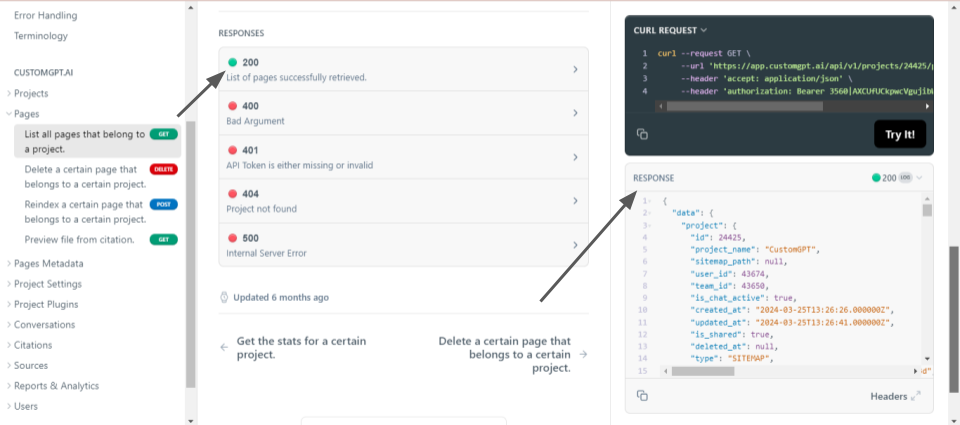
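The snippet itself appears only as an image. Based on the flags described above, it likely resembles the reconstruction below. The base URL and path, the example query values (`page=1`, `duration=90`, `order=desc`), the hypothetical `CUSTOMGPT_API_KEY` variable, and the bearer `authorization` header are assumptions to check against the CustomGPT.ai API reference.

```shell
#!/usr/bin/env bash
# Hedged reconstruction of the "list pages" request.
# ASSUMPTIONS: base URL, path, default query values, and the bearer
# authorization header; verify them against the CustomGPT.ai API reference.

# Build the endpoint URL from a project ID and optional query values.
build_pages_url() {
  local project_id=$1 page=${2:-1} duration=${3:-90} order=${4:-desc}
  printf 'https://app.customgpt.ai/api/v1/projects/%s/pages?page=%s&duration=%s&order=%s\n' \
    "$project_id" "$page" "$duration" "$order"
}

# The request itself: --request GET, --url, and --header as described above.
list_pages() {
  if [ -z "${CUSTOMGPT_API_KEY:-}" ]; then
    echo 'error: set CUSTOMGPT_API_KEY to your API key first' >&2
    return 1
  fi
  curl --request GET \
       --url "$(build_pages_url "$@")" \
       --header 'accept: application/json' \
       --header "authorization: Bearer $CUSTOMGPT_API_KEY"
}
```

After `export CUSTOMGPT_API_KEY='your-key'`, running `list_pages 123` would request the first page of results for project 123 (an illustrative ID).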

When we run this command in the terminal, CustomGPT.ai will respond with a JSON-formatted list of pages associated with the specified project, including details like page ID, URL, crawl status, and creation/update timestamps.
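To turn that JSON into something readable in the terminal, a script can pipe it through jq. This sketch assumes the pages sit under `.data.pages.data` with fields named `id`, `page_url`, `crawl_status`, and `index_status`; those paths are guesses to adjust after inspecting a real response.

```shell
#!/usr/bin/env bash
# Print one tab-separated line per page: ID, URL, crawl status, index status.
# ASSUMPTION: the JSON paths and field names below; adjust them to match
# the response you actually receive.
summarize_pages() {
  jq -r '.data.pages.data[]
         | [.id, .page_url, .crawl_status, .index_status]
         | map(tostring)   # convert numbers and nulls so @tsv accepts them
         | @tsv'
}
```

Used as a filter, for example `curl ... | summarize_pages | column -t`, it turns the raw response into an aligned table of page details.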

Test and Run the Code in the CustomGPT.ai Browser

To test and run the code snippet in the CustomGPT.ai browser, follow these steps:

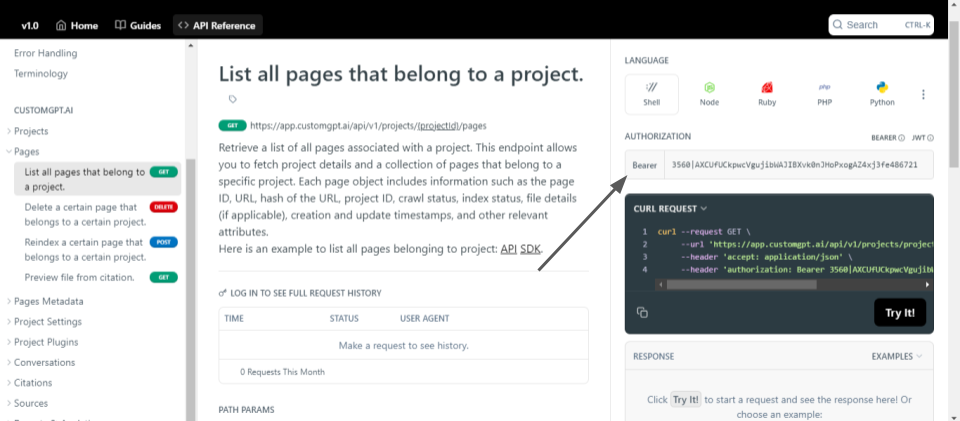

- Log in to your account and navigate to your profile settings, where you’ll find an option to generate a RAG API key. Click this option to generate your API key, then copy it, because you’ll need it to authenticate your RAG API requests.

- In the CustomGPT.ai RAG API browser interface, locate the authorization box. Paste the RAG API key you obtained in the previous step into this box. This step ensures that your requests are authorized to interact with the CustomGPT.ai RAG API.

- Identify the project ID for which you want to list the pages. You can find this information within your CustomGPT.ai project settings.

- Replace the ‘projectId’ placeholder in the provided code snippet with the actual project ID you obtained from your CustomGPT.ai project settings.

- Execute the code snippet in the CustomGPT.ai browser interface by clicking on the “Try it” button.

- The response from CustomGPT.ai will be displayed in the browser interface, showing the list of pages associated with the specified project in JSON format. You can review this response to analyze the page details retrieved from the RAG API.
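The browser’s “Try it” step can be mirrored in the terminal. This minimal sketch assumes the key lives in a hypothetical `CUSTOMGPT_API_KEY` variable and that the API accepts it as a bearer token. It saves the JSON body to a file, prints only the HTTP status code, and refuses to run while the `projectId` placeholder is still in the URL.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; the header format is an assumption to verify.

# Fetch URL into OUTFILE and print the HTTP status code.
fetch_json() {
  local url=$1 out=$2
  if [ -z "${CUSTOMGPT_API_KEY:-}" ]; then
    echo 'error: CUSTOMGPT_API_KEY is not set' >&2
    return 1
  fi
  case $url in
    *projectId*) echo 'error: replace the projectId placeholder first' >&2; return 1 ;;
  esac
  curl --silent --show-error \
       --output "$out" \
       --write-out '%{http_code}' \
       --header 'accept: application/json' \
       --header "authorization: Bearer $CUSTOMGPT_API_KEY" \
       --url "$url"
}

# Translate common HTTP status codes into a hint for the user.
describe_status() {
  case $1 in
    2??) echo 'ok' ;;
    401|403) echo 'rejected: check the API key' ;;
    404) echo 'not found: check the project ID and URL' ;;
    *) echo "unexpected HTTP status $1" ;;
  esac
}
```

For example, `status=$(fetch_json "$url" pages.json)` followed by `describe_status "$status"` reports whether the key and project ID were accepted, while the JSON stays in `pages.json` for review.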

Conclusion

In conclusion, the integration of CustomGPT.ai with the Shell programming language offers developers a streamlined approach to infuse command-line applications with CustomGPT.ai’s functionalities. By leveraging CustomGPT.ai’s RAG API alongside pre-built shell code snippets, developers can embed chatbot capabilities, enhancing user interactions and workflow efficiency. With the user-friendly integration process and the versatility of Shell programming, developers can craft intelligent applications tailored to diverse needs.

Frequently Asked Questions

How do I call a RAG API from Bash without adding a full SDK?

Bill French, Technology Strategist, said, “They’ve officially cracked the sub-second barrier, a breakthrough that fundamentally changes the user experience from merely ‘interactive’ to ‘instantaneous’.” For a Bash workflow, you can keep the integration lightweight: store your API key securely, send an HTTPS POST to the OpenAI-compatible REST endpoint at /v1/chat/completions with curl, and parse the JSON response with a CLI tool such as jq. Because the endpoint follows an OpenAI-compatible chat-completions pattern, shell scripts that already use that style of request usually need only small changes.
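A minimal sketch of that workflow, assuming hypothetical `CUSTOMGPT_BASE_URL` and `CUSTOMGPT_API_KEY` environment variables and a bearer-token header. The request and response shapes follow the standard OpenAI chat-completions format (`messages` in, `choices[0].message.content` out); the endpoint may require or accept additional fields such as `model`, so check the API documentation.

```shell
#!/usr/bin/env bash
# Minimal Bash + curl + jq client for an OpenAI-compatible chat endpoint.
# ASSUMPTIONS: CUSTOMGPT_BASE_URL and CUSTOMGPT_API_KEY are hypothetical
# variables; take the real base URL from the API documentation.

# Build the request body; jq escapes quotes and newlines in the question.
build_chat_payload() {
  jq -nc --arg q "$1" '{messages: [{role: "user", content: $q}]}'
}

# Send one question and print the assistant's reply text.
ask() {
  local question=$1 response
  if [ -z "${CUSTOMGPT_API_KEY:-}" ] || [ -z "${CUSTOMGPT_BASE_URL:-}" ]; then
    echo 'error: set CUSTOMGPT_API_KEY and CUSTOMGPT_BASE_URL' >&2
    return 1
  fi
  response=$(build_chat_payload "$question" |
    curl --silent --show-error --fail \
         --request POST \
         --url "$CUSTOMGPT_BASE_URL/v1/chat/completions" \
         --header 'content-type: application/json' \
         --header "authorization: Bearer $CUSTOMGPT_API_KEY" \
         --data-binary @-) || return 1
  # OpenAI-compatible responses carry the reply in choices[0].message.content.
  printf '%s\n' "$response" | jq -r '.choices[0].message.content'
}
```

With both variables exported, `ask "What is our refund policy?"` prints just the answer text. Building the body with jq and sending it with `--data-binary @-` keeps user input from breaking the JSON.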

What command-line jobs are best for a Bash plus RAG API workflow?

Evan Weber, Digital Marketing Expert, said, “I just discovered CustomGPT, and I am absolutely blown away by its capabilities and affordability! This powerful platform allows you to create custom GPT-4 chatbots using your own content, transforming customer service, engagement, and operational efficiency.” The best fits for Bash plus RAG are repetitive text-heavy jobs where answers should come from your own files rather than model memory. Common examples include policy lookups, document or log summaries, command help, ticket triage, and translation. Shell works well as the wrapper because it already automates commands and file handling, while the RAG API adds grounded text generation, analysis, and language translation.
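For log summaries, the shell’s job is mostly preparing input. A minimal sketch, assuming the last few lines of a log give enough context: the hypothetical `build_log_prompt` function below prints a prompt that could be sent as the user message of a chat-completions request. The instruction wording and the default of 50 lines are illustrative choices.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a summarization prompt from the most recent
# lines of a log file.
build_log_prompt() {
  local file=$1 lines=${2:-50}
  if [ ! -r "$file" ]; then
    printf 'error: cannot read %s\n' "$file" >&2
    return 1
  fi
  printf 'Summarize the following log excerpt and list any errors:\n\n'
  tail -n "$lines" "$file"   # only the newest lines, to keep the prompt small
}
```

Running it from cron or a CI step turns a repetitive review task into a scripted one: `build_log_prompt /var/log/app.log 100` produces the text to send.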

Why is my Bash AI assistant inconsistent on the same question?

Dan Mowinski, AI Consultant, said, “The tool I recommended was something I learned through 100 school and used at my job about two and a half years ago. It was CustomGPT.ai! That’s experience. It’s not just knowing what’s new. It’s remembering what works.” In a Bash assistant, inconsistent answers usually point to retrieval quality rather than the shell itself. Start by checking whether the right files were ingested and whether the source documents are current. Then test whether small wording changes in the prompt pull different passages. Citation support is useful here because it lets you inspect which source was retrieved, so you can tell whether the issue is document coverage, chunking, or vague phrasing.
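To run the wording test described above, a script can ask several phrasings of the same question and count how many distinct answers come back. This minimal sketch takes the name of any command or function that accepts a question and prints an answer; that client is an assumption you supply. Exact-match comparison is deliberately strict, so a count above 1 only flags the question for a manual look at its cited sources.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count distinct answers across prompt variants.
# Usage: compare_variants ASK_COMMAND "variant 1" "variant 2" ...
compare_variants() {
  local ask_cmd=$1 variant answer sums=''
  shift
  for variant in "$@"; do
    answer=$("$ask_cmd" "$variant") || return 1
    # Reduce each full answer to one checksum line so multi-line answers
    # are compared as a whole rather than line by line.
    sums+="$(printf '%s' "$answer" | cksum)"$'\n'
  done
  printf '%s' "$sums" | sort -u | wc -l | tr -d ' '
}
```

Passing the client as a parameter keeps the check independent of any particular API wrapper and makes it easy to try with a stub first.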

Where should the knowledge base live for a Bash RAG tool?

Barry Barresi, Social Impact Consultant, described his setup this way: “Powered by my custom-built Theory of Change AIM GPT agent on the CustomGPT.ai platform. Rapidly Develop a Credible Theory of Change with AI-Augmented Collaboration.” For a Bash RAG tool, keep the knowledge base outside the shell script and ingest it into the retrieval system. Let the script stay thin and stateless while your source of truth lives in managed content such as websites, documents, JSON, CSV, audio, video, or URLs. That design is easier to maintain, easier to update, and much safer than hardcoding knowledge into Bash variables or local script files.

Can the same assistant power both a terminal tool and a website widget?

Andy Murphy, Owner, Integrity Data Insights LLC, said, “The simplicity of setting this up was impressive. Within a few minutes, they had a working chat bot. It can be seamlessly embedded into another website for very easy integration. This could instantly add value to a business. I will definitely be trying this out.” You can use one API-backed assistant across both interfaces because the documented deployment options include API, embed widget, live chat, and search bar. In practice, that means a shell script and a web interface can call the same OpenAI-compatible /v1/chat/completions endpoint while sharing the same underlying knowledge sources.

How should I secure a Bash integration that queries private company documents?

Secure a Bash integration in layers. Use the documented API key authentication instead of embedding sensitive content directly in the script, limit the assistant to the knowledge sources it actually needs, and review citation-backed answers when the workflow touches private documents. The documented security and compliance credentials include SOC 2 Type 2 certification, GDPR compliance, and a statement that customer data is not used for model training. That makes the setup better suited to HR, legal, and internal policy workflows than storing sensitive answers inside hardcoded shell logic.
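One way to keep the key out of the script itself is to load it at run time. This minimal sketch reads a hypothetical `CUSTOMGPT_API_KEY` environment variable, or falls back to a key file (the default path is an illustrative choice) and refuses that file if group or other users can read it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: load the API key from the environment or from a
# private file, never from a value hardcoded in the script.
load_api_key() {
  local key_file=${1:-"$HOME/.config/customgpt/api_key"}
  if [ -n "${CUSTOMGPT_API_KEY:-}" ]; then
    printf '%s\n' "$CUSTOMGPT_API_KEY"
    return 0
  fi
  if [ ! -r "$key_file" ]; then
    printf 'error: no key in CUSTOMGPT_API_KEY and %s is not readable\n' "$key_file" >&2
    return 1
  fi
  # Refuse a key file that group or others can read (5th or 8th ls -l character).
  case $(ls -l "$key_file") in
    ????r*|???????r*)
      printf 'error: %s is readable by other users; run chmod 600 on it\n' "$key_file" >&2
      return 1 ;;
  esac
  # Drop whitespace such as a trailing newline, assuming the key contains none.
  tr -d '[:space:]' < "$key_file"
  printf '\n'
}
```

A script can then start with `key=$(load_api_key) || exit 1`. Note that a key passed in a curl `--header` argument may be visible to other local users in process listings on shared machines; curl 7.55.0 and later can read headers from a file with `--header @file` instead.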

Related Resources

If you’re exploring how shell workflows connect to AI systems, this guide adds useful context.

- Enterprise RAG API — Learn how the CustomGPT.ai API supports enterprise-grade retrieval-augmented generation for scalable, production-ready integrations.