TL;DR

- Choose the right RAG vector database (Pinecone, Weaviate, or ChromaDB) based on scalability, ease of integration, and developer features.

- Pinecone excels for production RAG systems needing guaranteed performance and minimal ops overhead, but costs 3-5x more than alternatives.

- Weaviate offers the best balance of features and flexibility for complex RAG applications with its hybrid search and graph capabilities.

- ChromaDB dominates for prototyping and smaller deployments with its zero-config approach.

- Choose based on scale, budget, and operational complexity rather than raw performance: all three handle RAG workloads effectively.

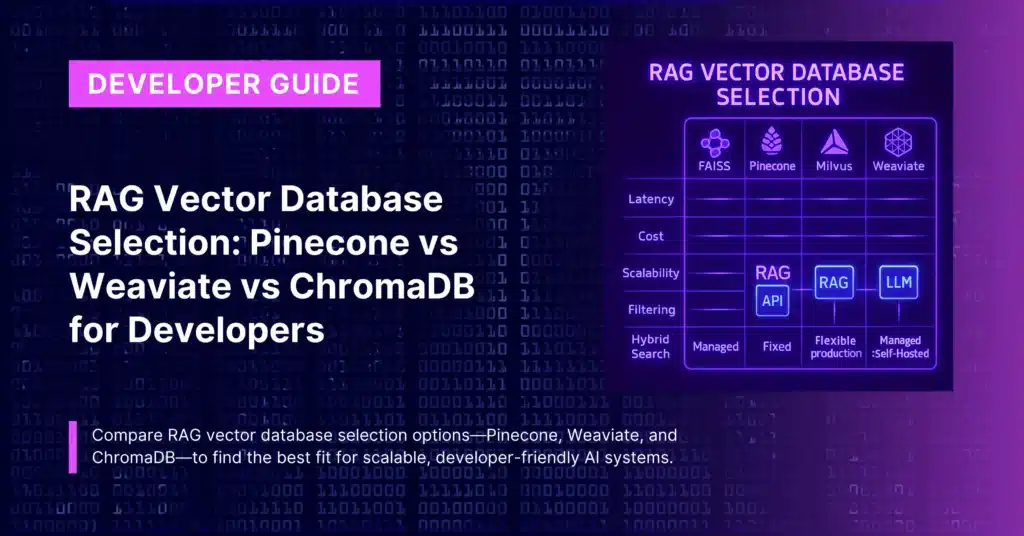

When building Retrieval-Augmented Generation (RAG) systems, your vector database choice fundamentally determines performance, cost, and operational complexity.

Unlike traditional databases focused on exact matches, vector databases power semantic search by storing high-dimensional embeddings that capture meaning, enabling RAG systems to find contextually relevant information rather than simple keyword matches.
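
To make the idea of semantic search concrete, here is a minimal sketch of what a vector database does at query time: score stored embeddings against a query embedding and return the closest ones. The three-dimensional vectors, document ids, and the `top_k` helper are made up for illustration; production systems use approximate nearest-neighbor indexes rather than this brute-force scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as unrelated to everything
    return dot / (norm_a * norm_b)

def top_k(query, index, k=2):
    """Brute-force search over a dict of id -> embedding, best match first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Toy 3-dimensional "embeddings" (assumed values); real models emit
# hundreds or thousands of dimensions.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-window": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # hypothetical embedding of a refund question
results = top_k(query, index, k=2)
print([doc_id for doc_id, _ in results])
```

The two refund-related documents score far higher than the shipping document even though no keywords are compared, which is the behavior RAG retrieval relies on.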

The three most popular choices—Pinecone, Weaviate, and ChromaDB—each excel in different scenarios. This technical comparison provides the data-driven insights you need to make the right architectural decision for your RAG implementation.

Performance Benchmarks and Architecture Analysis

Pinecone: Serverless Performance at Premium Cost

Pinecone’s serverless architecture automatically handles sharding, replication, and load balancing. Its proprietary indexing algorithm combines graph-based and tree-based approaches, achieving O(log n) complexity for both inserts and queries.

Performance Characteristics:

- Query latency: <50ms for most RAG workloads

- Throughput: 10,000+ QPS on standard pods

- Scalability: Auto-scaling with zero configuration

- Index build time: ~2-5 minutes for 1M vectors

Technical Strengths:

- Pod-based isolation prevents noisy neighbor issues

- Built-in replication across availability zones

- Real-time updates with eventual consistency (new writes can take a moment to become visible to queries)

- Multi-region deployment options

Cost Analysis (1M vectors, 1536 dimensions):

- Starter pod (p1.x1): ~$70/month

- Performance pod (s1.x1): ~$140/month

- High-memory pod (p2.x1): ~$280/month

- Plus additional costs for queries and storage
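
As a rough sanity check on what the 1M-vector, 1536-dimension workload means in raw storage, the arithmetic can be sketched as follows. This assumes 4-byte float32 values, which the comparison does not state, and it ignores index structures, metadata, and replication, all of which add to the real footprint.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw embedding payload only; bytes_per_value=4 assumes float32."""
    return num_vectors * dimensions * bytes_per_value

size_bytes = raw_vector_bytes(1_000_000, 1536)
size_gb = size_bytes / 1e9  # decimal gigabytes
print(f"{size_bytes:,} bytes = {size_gb:.3f} GB of raw vectors")
```

Under those assumptions the raw vectors come to roughly 6 GB, a useful baseline when comparing pod sizes or self-hosted instance memory.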

Weaviate: Hybrid Search with Graph Intelligence

Weaviate’s modular architecture supports pluggable vectorizers, rerankers, and storage backends. Its hybrid search capabilities combine dense vectors with sparse BM25 scoring, enabling both semantic and keyword search in a single query.
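
The idea of combining dense and sparse scores can be illustrated with a small fusion sketch. This is not Weaviate's implementation; it is a generic alpha-weighted blend of min-max normalized scores, and the function names, document ids, and score values are hypothetical.

```python
def min_max_normalize(scores):
    """Rescale a dict of id -> score into the range [0, 1]."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}

def hybrid_fuse(vector_scores, keyword_scores, alpha=0.5):
    """Blend two score sets: alpha=1.0 is pure vector, alpha=0.0 is pure keyword."""
    v = min_max_normalize(vector_scores)
    kw = min_max_normalize(keyword_scores)
    fused = {}
    for doc_id in set(v) | set(kw):
        # A document missing from one result list contributes 0 for that side.
        fused[doc_id] = alpha * v.get(doc_id, 0.0) + (1.0 - alpha) * kw.get(doc_id, 0.0)
    return sorted(fused.items(), key=lambda pair: pair[1], reverse=True)

# Assumed example scores: cosine similarities and BM25 scores live on
# different scales, which is why both are normalized before blending.
vector_scores = {"doc-a": 0.92, "doc-b": 0.88, "doc-c": 0.40}
keyword_scores = {"doc-b": 7.1, "doc-c": 6.8, "doc-d": 1.2}
ranked = hybrid_fuse(vector_scores, keyword_scores, alpha=0.5)
print(ranked[0][0])
```

Here `doc-b` wins because it ranks well on both signals, while `doc-a` is strong semantically but absent from the keyword results. Tuning `alpha` shifts the balance toward exact-term or semantic matching.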

Performance Characteristics:

- Query latency: 20-100ms depending on complexity

- Throughput: 5,000+ QPS with optimized configuration

- Hybrid search: Native BM25 + vector combination

- Multi-modal: Text, images, and audio in unified schema

Technical Strengths:

- GraphQL interface with powerful filtering

- Native support for auto-vectorization modules

- Knowledge graph capabilities with object relationships

- Extensive metadata filtering and faceted search

Cost Analysis (1M vectors, managed cloud):

- Sandbox: Free up to 1M vectors

- Standard: ~$25-100/month depending on traffic

- Enterprise: Custom pricing with dedicated clusters

ChromaDB: Developer-First Simplicity

ChromaDB’s embedded architecture runs alongside your application, eliminating network latency for local development. Its segment-based storage engine optimizes for write performance, making it ideal for frequently updated datasets.

Performance Characteristics:

- Query latency: 5-50ms (embedded mode)

- Throughput: 2,000+ QPS for typical deployments

- Memory footprint: Minimal when embedded

- Startup time: Instant for embedded, seconds for server mode

Technical Strengths:

- Zero configuration required for getting started

- Pythonic API with intuitive data handling

- Built-in persistence with SQLite backend

- Automatic embedding generation with multiple providers

Cost Analysis:

- Self-hosted: Infrastructure costs only (~$20-50/month)

- Cloud (coming soon): Expected competitive pricing

- Development: Completely free for local use

Feature Comparison Matrix

| Feature | Pinecone | Weaviate | ChromaDB |
| --- | --- | --- | --- |
| Deployment | Managed only | Self-hosted + managed | Self-hosted + embedded |
| Hybrid Search | API layer | Native | Limited |
| Multi-tenancy | Namespaces | Collections + tenants | Collections |
| Metadata Filtering | Basic | Advanced GraphQL | Python-native |
| Auto-vectorization | No | Yes (modules) | Yes (built-in) |
| Real-time Updates | Yes | Yes | Yes |
| Backup/Recovery | Automatic | Manual setup | File-based |
| Monitoring | Built-in dashboard | Prometheus metrics | Basic logging |

RAG-Specific Implementation Guidance

Migration and Scaling Strategies

Starting Small and Scaling Up

Recommended Path:

- Prototype with ChromaDB to validate RAG approach and iterate quickly

- Evaluate with representative data using all three platforms

- Move to Weaviate or Pinecone based on production requirements

Multi-Database Strategies

Some organizations use multiple vector databases for different workloads:

- ChromaDB for development and rapid prototyping

- Weaviate for complex search features and hybrid queries

- Pinecone for customer-facing applications requiring guaranteed performance

Data Portability Considerations

Vector databases differ in export capabilities:

- ChromaDB: Full SQLite export with embeddings and metadata

- Weaviate: GraphQL-based export requires custom tooling

- Pinecone: Limited export options, vendor lock-in concerns
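
One way to reduce lock-in regardless of platform is to keep a neutral copy of your records. The sketch below writes ids, embeddings, and metadata as JSON Lines and reads them back. The record shape and the `dump_jsonl` / `load_jsonl` helpers are assumptions for illustration, not an export format defined by any of the three databases; fetching the records from each database is left to its own client.

```python
import io
import json

def dump_jsonl(records, fh):
    """Write one JSON object per line to an open text file handle."""
    for record in records:
        fh.write(json.dumps(record) + "\n")

def load_jsonl(fh):
    """Yield records back from a JSON Lines file handle, skipping blank lines."""
    for line in fh:
        if line.strip():
            yield json.loads(line)

# Hypothetical records; in practice these would be paged out of the source database.
sample = [
    {"id": "doc-1", "embedding": [0.12, -0.5, 0.33], "metadata": {"source": "faq"}},
    {"id": "doc-2", "embedding": [0.9, 0.01, -0.2], "metadata": {"source": "manual"}},
]

buffer = io.StringIO()  # stands in for a real file opened with open(path, "w")
dump_jsonl(sample, buffer)
buffer.seek(0)
restored = list(load_jsonl(buffer))
print(restored == sample)
```

Because each line is an independent record, the file can be streamed into another database in batches without loading everything into memory.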

Cost Optimization Strategies

Pinecone Cost Management

- Use starter pods for development and testing

- Implement query batching to reduce API calls

- Enable query filtering to reduce computational overhead

- Monitor pod utilization and right-size instances
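
The batching advice above can be sketched with a small chunking helper. `fake_upsert` is a hypothetical stand-in for whatever client call you use, and the batch size of 100 is an arbitrary example; check your provider's documented request limits before choosing one.

```python
from itertools import islice

def chunked(items, batch_size):
    """Yield successive lists of at most batch_size items from any iterable."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch

calls = []

def fake_upsert(batch):
    """Hypothetical stand-in for a real client call; records the batch size."""
    calls.append(len(batch))

records = [{"id": f"doc-{i}"} for i in range(250)]
for batch in chunked(records, 100):
    fake_upsert(batch)

print(calls)  # three requests instead of 250 single-record calls
```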

Weaviate Optimization

- Self-host for predictable costs at scale

- Use compression techniques like binary quantization

- Optimize shard configuration for your query patterns

- Leverage caching for frequently accessed data
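
To show why binary quantization saves so much memory, here is a minimal sketch of the general technique: keep only the sign of each dimension and compare vectors by Hamming distance. It illustrates the idea, not Weaviate's implementation, and the helper names and sample vectors are made up.

```python
def binarize(vector):
    """Pack signs into one int: bit i is 1 when vector[i] is positive."""
    bits = 0
    for i, value in enumerate(vector):
        if value > 0:
            bits |= 1 << i
    return bits

def hamming_distance(a, b):
    """Number of bit positions where two packed codes differ."""
    return bin(a ^ b).count("1")

doc = binarize([0.3, -0.1, 0.7, -0.9])    # bits 0 and 2 set
query = binarize([0.2, 0.4, 0.1, -0.5])   # bits 0, 1 and 2 set
print(hamming_distance(doc, query))

# Storage per 1536-dimension vector: float32 vs one bit per dimension.
float32_bytes = 1536 * 4
binary_bytes = 1536 // 8
print(float32_bytes, binary_bytes, float32_bytes // binary_bytes)
```

Going from 32 bits to 1 bit per dimension is a 32x reduction in raw vector size. The trade-off is lost precision, so quantized search is commonly paired with rescoring against the original vectors.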

ChromaDB Efficiency

- Embedded mode eliminates hosting costs

- Batch operations improve throughput

- Memory management prevents resource waste

- Selective indexing reduces storage requirements

Production Implementation Checklist

Security and Compliance

- Network isolation: VPC peering (Pinecone, Weaviate) vs local access (ChromaDB)

- Data encryption: At rest and in transit across all platforms

- Access control: API keys (Pinecone), RBAC (Weaviate), application-level (ChromaDB)

- Audit logging: Built-in (Pinecone), configurable (Weaviate), application-level (ChromaDB)

Monitoring and Observability

- Query performance: All platforms provide latency metrics

- Resource utilization: CPU, memory, and storage monitoring

- Error tracking: Failed queries and system errors

- Cost tracking: Usage-based billing requires careful monitoring
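
Whatever the platform reports, it can help to measure query latency from the application side as well. The sketch below times a call and summarizes samples as percentiles using only the standard library. The `timed` and `latency_summary` helpers are illustrative names, and `sum` is used as a stand-in for a real query function.

```python
import statistics
import time

def timed(fn, *args, **kwargs):
    """Run fn and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms

def latency_summary(latencies_ms):
    """Return p50/p95/p99 from a list of at least two latency samples."""
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}

samples = []
for _ in range(200):
    _, ms = timed(sum, range(1000))  # stand-in for a vector query call
    samples.append(ms)

print(latency_summary(samples))
```

Tail percentiles such as p95 and p99 usually matter more than the average for user-facing RAG, since one slow retrieval delays the whole response.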

High Availability and Disaster Recovery

- Pinecone: Built-in replication and automated backups

- Weaviate: Manual cluster setup and backup procedures

- ChromaDB: File-based backups and application-level replication

Integration with RAG Frameworks

All three vector databases integrate well with popular RAG frameworks:

LangChain Support:

- All platforms have native LangChain integration

- Similar API patterns across all three

- Built-in support for common RAG patterns

LlamaIndex Compatibility:

- Full support for all three databases

- Optimized connectors for each platform

- Advanced retrieval strategies available

CustomGPT Integration: For teams wanting to avoid vector database complexity entirely, CustomGPT’s RAG API provides enterprise-grade retrieval without managing vector infrastructure. Their developer starter kit demonstrates complete RAG implementation with voice features and multiple deployment options.

For more information on the RAG API:

- CustomGPT.ai’s open-source UI starter kit (custom chat screens, embeddable chat window and floating chatbot on website) with 9 social AI integration bots and its related setup tutorials.

- Find our API sample usage code snippets here.

- Our RAG API’s Postman hosted collection – test the APIs on postman with just 1 click.

- Our Developer API documentation.

- API explainer videos on YouTube and a dev focused playlist.

- Join our bi-weekly developer office hours and our past recordings of the Dev Office Hours.

P.S. Our API endpoints are OpenAI compatible: replace the API key and endpoint, and any OpenAI-compatible project works with your RAG data.

Want to build something with our hosted MCPs? Check out the docs.

Frequently Asked Questions

Which vector database is best for RAG when answer accuracy matters more than raw speed?

Michael Juul Rugaard of The Tokenizer said, "Based on our huge database, which we have built up over the past three years, and in close cooperation with CustomGPT, we have launched this amazing regulatory service, which both law firms and a wide range of industry professionals in our space will benefit greatly from." For RAG systems where users ask terminology-sensitive or regulation-heavy questions, Weaviate is usually the strongest choice because native hybrid BM25 plus vector search and metadata filtering help retrieve exact terms and semantic matches together. Pinecone is a better fit when predictable performance and minimal ops matter more than advanced retrieval logic. ChromaDB works best for smaller corpora and simpler retrieval needs.

How should I choose between Pinecone, Weaviate, and ChromaDB for a production RAG system?

Nitro! Bootcamp launched 60 AI chatbots in 90 minutes for 30+ minority-owned small businesses with a 100% success rate. That kind of rollout shows why operational simplicity matters. Choose Pinecone when you need guaranteed performance and minimal database operations in production. Choose Weaviate when answer quality depends on hybrid search, strong filtering, or graph-like relationships. Choose ChromaDB when you want the fastest path from prototype to a smaller deployment and can accept fewer advanced retrieval features.

Is Weaviate better than ChromaDB for hybrid search in RAG?

Usually yes. Weaviate is the better choice when users mix exact keywords, names, dates, and semantic questions in the same query because it combines BM25 keyword search with vector search and supports richer metadata filtering. ChromaDB is stronger when you want a lightweight, zero-config store for mostly semantic retrieval and local development.

When should developers pick Pinecone instead of Weaviate for RAG?

Pick Pinecone when you want guaranteed performance, auto-scaling, and as little operational overhead as possible. Pick Weaviate when retrieval quality depends on hybrid search, flexible filtering, or object relationships inside the datastore. Cost can be the tiebreaker: Pinecone is the premium-priced option and can cost 3-5x more than alternatives, so it makes the most sense when simplicity at scale is worth the extra spend.

Can ChromaDB handle real RAG workloads, or is it only for prototypes?

ChromaDB is not only for prototypes. All three options can handle RAG workloads effectively, but ChromaDB is best when you want zero-config setup, local development, and smaller deployments. Dr. Michael Levin said, "Omg finally, I can retire! A high-school student made this chat-bot trained on our papers and presentations". That kind of outcome shows that useful RAG systems can start with a focused corpus and a simple stack before a team needs the extra scale or retrieval features of Pinecone or Weaviate.

Priyansh is a Developer Relations Advocate who loves technology, writes about it, and creates deeply researched content on it.