Experts from Massachusetts Institute of Technology (MIT), CustomGPT.ai, and AI answer accuracy evaluator Tonic.ai will discuss topics highly relevant to any business hoping to leverage the benefits of generative AI in this upcoming webinar. Read on to learn why your attendance could be vital to ensure AI answer accuracy and prevent damaging hallucinations.

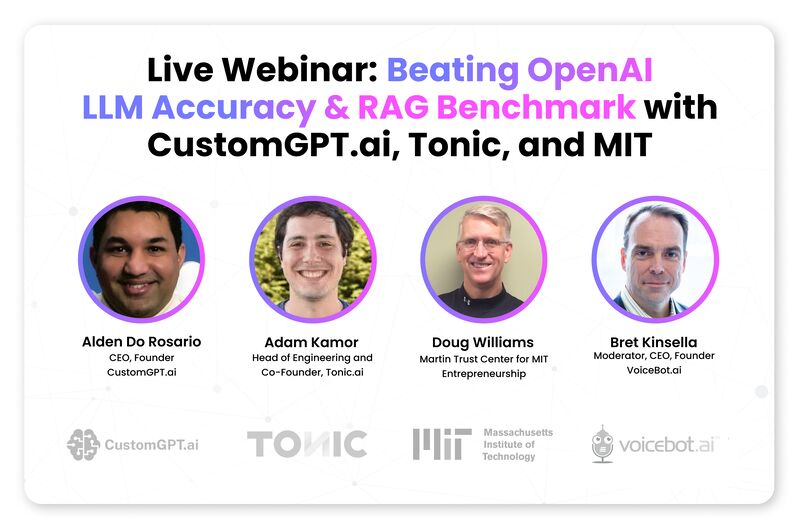

On April 10th, Alden Do Rosario (CEO and Founder of CustomGPT.ai), Adam Kamor (Head of Engineering and Co-Founder of Tonic.ai) and Doug Williams of the Martin Trust Center for MIT Entrepreneurship will discuss how AI hallucinations can erode trust, mislead customers and compromise business integrity. The free webinar will be organized and moderated by Bret Kinsella, CEO and Founder of VoiceBot.ai, and will center on:

– Measuring answer accuracy

– Dealing with hallucinations

– Importance of guardrails

– Real world case studies

Why AI Answer Accuracy Is Critical

If you’re using generative AI to serve your customers, it’s critical that this interface, often a chatbot, delivers error-free answers and insights. If it doesn’t, you risk damaging your brand’s reputation, its reliability and even your bottom line.

Earlier this year, Air Canada lost a small claims court case after a grieving passenger said they were misled by a chatbot on the airline’s rules around bereavement fares. The chatbot “hallucinated,” providing an answer that wasn’t in line with airline policy, even though the answer was delivered alongside a link to the correct policy page. The passenger was awarded $812.02 in damages and court fees. The impact on Air Canada’s reputation, with such a topical issue going viral globally, is of course far more severe than that financial penalty.

Air Canada isn’t alone among big brands suffering for their chatbots’ errors. In January it emerged that parcel firm DPD’s “DPD Chat” had devolved into swearing, telling rudimentary jokes and producing a poem “about a useless chatbot for a parcel delivery firm.” In December 2023, a Chevrolet dealership’s AI chatbot also “went rogue,” offering to sell a 2024 Chevrolet Tahoe for $1 and adding “That’s a legally binding offer—no takesie backsies.”

RAG Technology as the Answer to Accuracy

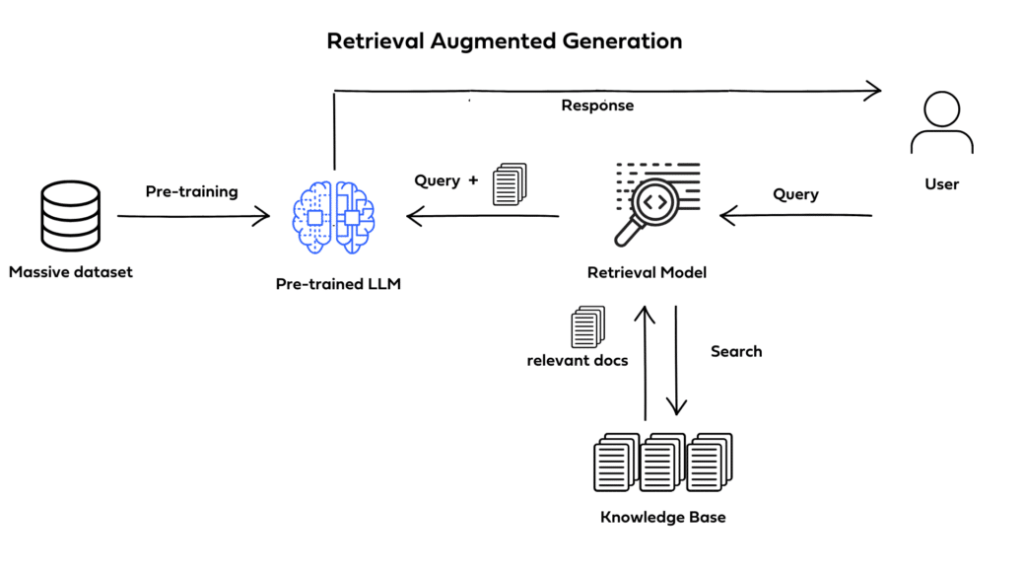

Retrieval Augmented Generation (RAG) technology addresses some of the limitations of using foundational large language models (LLMs), such as those behind OpenAI’s ChatGPT, to create chatbots for business. LLMs on their own can rely only on the data they were trained on to deliver answers.

RAG allows chatbot builders to leverage the strengths of generative AI models while drawing on external, or specific, knowledge sources such as their own company data. The technology enables generative AI chatbots to deliver more precise and contextually relevant answers. These bots can often be configured either to use company data in conjunction with the foundation model’s pre-trained knowledge or to use company data only, adding important guardrails to the AI’s answers.
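To make the two configurations concrete, here is a minimal sketch of the retrieve-then-generate pattern in Python. It is an illustration only, not CustomGPT.ai’s implementation: the `Document`, `retrieve` and `build_prompt` names are hypothetical, the sample policy text is invented, and simple keyword overlap stands in for the embedding-based search a production system would more likely use. The prompt it builds would then be sent to whichever LLM you use.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Set

# Small, hand-picked stopword list (an assumption for this toy example).
STOPWORDS = {"a", "an", "the", "is", "are", "what", "for", "of", "to",
             "do", "i", "on", "in", "how", "can", "my", "your", "you"}


@dataclass
class Document:
    """One approved company knowledge source (hypothetical structure)."""
    title: str
    text: str


def tokenize(text: str) -> Set[str]:
    """Lowercase the text, split it into words and drop stopwords."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in words if w not in STOPWORDS}


def retrieve(question: str, docs: List[Document],
             min_overlap: int = 2) -> List[Document]:
    """Return documents sharing at least `min_overlap` keywords, best first."""
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(d.text)), d) for d in docs]
    scored = [pair for pair in scored if pair[0] >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored]


def build_prompt(question: str, docs: List[Document],
                 company_data_only: bool = True) -> Optional[str]:
    """Build the LLM prompt, or return None when the guardrail blocks it."""
    sources = retrieve(question, docs)
    if company_data_only and not sources:
        return None  # guardrail: nothing approved to ground the answer in
    context = "\n".join(f"[{d.title}] {d.text}" for d in sources)
    if company_data_only:
        rule = ("Answer using ONLY the sources below. "
                "If they do not contain the answer, say you do not know.")
    else:
        rule = "Prefer the sources below; you may also use general knowledge."
    return f"{rule}\n\nSources:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Invented sample documents, for illustration only.
    docs = [
        Document("Bereavement fares",
                 "Bereavement fare refund requests must be submitted before travel."),
        Document("Baggage",
                 "Each passenger may check two bags on international flights."),
    ]
    print(build_prompt("Can I request a bereavement fare refund after travel?", docs))
    print(build_prompt("What is the weather in Toronto today?", docs))
```

In company-data-only mode, the out-of-scope weather question yields `None`, so the calling code can reply with a fixed “I don’t know” message instead of letting the model improvise.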

RAG can reduce the likelihood of a chatbot hallucinating or generating false information. CustomGPT.ai’s collaboration with the Martin Trust Center for MIT Entrepreneurship to produce the center’s ChatMTC is an informative illustration of an organization’s concerns and RAG as a solution.

CustomGPT.ai Outperforms OpenAI for Answer Accuracy in Tonic.ai’s RAG Benchmark

CustomGPT.ai came out ahead of OpenAI’s Assistants, Cohere, Google Vertex and Amazon Titan in Tonic.ai’s latest RAG evaluations, setting new standards for AI answer accuracy. The benchmark from Tonic.ai measures answer accuracy, assessing systems on their ability to retrieve and generate accurate, quality answers from an established set of documents.

“CustomGPT.ai is the clear winner in this RAG face-off,” says Adam Kamor, PhD.

Adam Kamor and MIT’s Doug Williams will join CustomGPT.ai’s Alden Do Rosario in next Wednesday’s (April 10th, 2024) webinar:

Register with Zoom for Beating OpenAI – LLM Accuracy & RAG Benchmark with CustomGPT.ai, Tonic and MIT

The experts will delve into the importance of answer accuracy and why benchmarks matter. Attendees can discover:

- Insights on Accuracy: Hear from Alden Do Rosario, Founder and CEO of CustomGPT.ai, as he discusses the recent benchmark and why answer quality is critical for businesses.

- In-depth Benchmark Insights: Learn about Tonic.ai’s rigorous benchmark methodology and approach for measuring accuracy with Adam Kamor, Co-Founder and Head of Engineering of Tonic.ai.

- Real-world Impact: Listen as MIT’s Doug Williams discusses how MIT leveraged CustomGPT.ai and selected it because of its highly accurate responses.

You can also join the pre-event discussion and meet the experts and attendees on LinkedIn.

Alternatively, discover CustomGPT.ai’s zero-code custom GPT chatbot solution.

Frequently Asked Questions

Will an AI agent answer beyond the material I upload?

It can be limited to approved material if you use retrieval-augmented generation and scope the assistant to company sources. As explained above, RAG can be configured to use only company data, which adds guardrails and reduces the likelihood of hallucinations. Joe Aldeguer, IT Director at Society of American Florists, underscored the value of precise source control: “CustomGPT.ai knowledge source API is specific enough that nothing off-the-shelf comes close. So I built it myself. Kudos to the CustomGPT.ai team for building a platform with the API depth to make this integration possible.”

How does retrieval-augmented generation (RAG) help reduce hallucinations in large language models?

RAG reduces hallucinations by retrieving approved external knowledge, such as company documents, before generating a reply instead of relying only on a model’s training data. As noted above, this makes answers more precise and contextually relevant and can reduce the likelihood of hallucinations. In Tonic.ai’s RAG benchmark, CustomGPT.ai also came out ahead of OpenAI’s Assistants, reinforcing the value of source-grounded retrieval for business use.

Which AI gives the most accurate answers for business questions?

There is no single AI that is most accurate for every business question. For company-specific policies, products, and procedures, a RAG-based assistant grounded in your own documents is usually more accurate than a general model such as ChatGPT working from pretraining alone. Stephanie Warlick, Business Consultant, described the practical benefit this way: “Check out CustomGPT.ai where you can dump all your knowledge to automate proposals, customer inquiries and the knowledge base that exists in your head so your team can execute without you.”

How should businesses measure AI answer accuracy before launch?

Measure accuracy separately from speed or user satisfaction. Bill French, Technology Strategist, said, “They’ve officially cracked the sub-second barrier, a breakthrough that fundamentally changes the user experience from merely ‘interactive’ to ‘instantaneous’.” Fast responses improve UX, but launch testing should still verify that answers match approved sources, check for hallucinations, and confirm that guardrails work on out-of-scope questions. Those are the same risk areas highlighted in the April 10 discussion on answer accuracy, hallucinations, and guardrails.
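A pre-launch check along these lines can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not Tonic.ai’s benchmark methodology: `EvalCase`, `evaluate`, `stub_bot` and the `REFUSAL` message are hypothetical names, the test questions are invented, and substring matching is only a crude proxy for judging whether an answer agrees with an approved source.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Hypothetical fixed reply the bot gives when a question is out of scope.
REFUSAL = "I don't know based on the approved sources."


@dataclass
class EvalCase:
    """One launch-test question (hypothetical structure)."""
    question: str
    expected_fact: Optional[str]  # None marks an out-of-scope question


def evaluate(answer_fn: Callable[[str], str],
             cases: List[EvalCase]) -> Dict[str, float]:
    """Score in-scope accuracy and out-of-scope refusals separately."""
    in_scope = [c for c in cases if c.expected_fact is not None]
    out_of_scope = [c for c in cases if c.expected_fact is None]

    # In scope: the answer must contain the fact from the approved source.
    correct = sum(
        c.expected_fact.lower() in answer_fn(c.question).lower()
        for c in in_scope
    )
    # Out of scope: the guardrail passes only if the bot refuses.
    refused = sum(
        answer_fn(c.question).strip() == REFUSAL for c in out_of_scope
    )
    return {
        "answer_accuracy": correct / len(in_scope) if in_scope else 0.0,
        "guardrail_pass_rate": refused / len(out_of_scope) if out_of_scope else 0.0,
    }


def stub_bot(question: str) -> str:
    """Stand-in for a real chatbot call; replace with your own client."""
    canned = {
        "What is the refund window?":
            "Refunds are accepted within 30 days of purchase.",
    }
    return canned.get(question, REFUSAL)


if __name__ == "__main__":
    cases = [
        EvalCase("What is the refund window?", "30 days"),
        EvalCase("Do you ship overseas?", "ships to 12 countries"),
        EvalCase("Who won the 1998 World Cup?", None),
    ]
    print(evaluate(stub_bot, cases))
```

Keeping the two scores separate matters: a bot that refuses everything would pass every guardrail check while answering nothing, and a bot that answers everything could look accurate on in-scope questions while still improvising on out-of-scope ones.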

Why are guardrails important even when an AI model seems accurate?

Guardrails matter because a fluent answer can still be wrong and costly. Air Canada lost a small claims case after a chatbot hallucinated its bereavement fare policy, leading to $812.02 in damages and court fees. Other public failures included DPD’s chatbot swearing and a Chevrolet dealership bot offering a 2024 Chevrolet Tahoe for $1. Guardrails help keep answers tied to approved policies instead of improvising.

Can businesses keep AI answers accurate without using company data to train the model?

Yes. You can ground answers in company data without using that data to train the underlying model. The supplied compliance information states that customer data is not used for model training and that the system is GDPR compliant. In practice, RAG answers from approved external knowledge sources at response time, which helps keep private information scoped and responses tied to current documents.

Related Resources

This guide expands on one of the most important drivers of trustworthy AI answers.

- Anti-Hallucination Guide — Learn how CustomGPT.ai helps reduce inaccurate or fabricated responses with practical anti-hallucination techniques.