In this series of articles on the CustomGPT.ai SDK, we’ve explored various aspects of integrating custom chatbots into applications. Today we cover another operation: streaming responses with the CustomGPT.ai RAG API and SDK. Streaming offers a dynamic way to interact with chatbots: the reply appears piece by piece as it is generated instead of arriving all at once. In this article, we’ll explore how developers can use the CustomGPT.ai RAG API and SDK to implement streaming and build more engaging, interactive user experiences.

Before streaming responses with the CustomGPT.ai RAG API and SDK, let’s look at what streaming is.

What is Streaming?

Streaming is a technique used to transmit data continuously in a steady flow, allowing the recipient to start processing and using the data as soon as it’s received, rather than waiting for the entire dataset to be transmitted. It involves sending data in small, manageable chunks, which can be processed incrementally. Streaming is commonly used in various applications such as video and audio streaming, real-time messaging, and data processing, enabling efficient use of resources and facilitating real-time interactions.

Modes of Streaming/Data Retrieval

Setting streaming to false or to true selects one of two modes of data retrieval.

Streaming False

This mode indicates that data will be fetched in its entirety before being delivered to the client. In other words, the entire response is sent at once after all processing is complete.

Streaming True

This mode indicates that data will be sent in a continuous stream, allowing the client to start processing it as soon as the first chunk of data is received. This enables real-time interaction and can be particularly useful for large datasets or when immediate processing of data is required.
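To make the two modes concrete, here is a minimal sketch in plain Python. It does not call CustomGPT.ai: `generate_answer` and `respond` are hypothetical names that simulate a model producing text gradually. The only difference between the modes is whether the caller gets a finished string or an iterator of chunks.

```python
from typing import Iterator, Union


def generate_answer(text: str, chunk_size: int = 8) -> Iterator[str]:
    """Yield the answer in small chunks, simulating gradual generation."""
    for start in range(0, len(text), chunk_size):
        yield text[start:start + chunk_size]


def respond(text: str, stream: bool) -> Union[str, Iterator[str]]:
    """Return the whole answer (stream=False) or an iterator of chunks (stream=True)."""
    chunks = generate_answer(text)
    if stream:
        return chunks           # the caller handles each chunk as it arrives
    return "".join(chunks)      # the caller waits for the complete answer


answer = "Streaming delivers output piece by piece."

# Streaming false: one complete string, available only after all chunks exist.
full = respond(answer, stream=False)
print(full)

# Streaming true: each chunk can be displayed immediately.
for chunk in respond(answer, stream=True):
    print(chunk, end="", flush=True)
print()
```

Both calls produce the same text in the end; streaming only changes when the caller can start using it.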

Streaming with CustomGPT.ai

Streaming with CustomGPT.ai involves receiving text responses in real time as they are generated by the AI model. Output flows continuously to the client, which does not have to wait for the entire response to be generated. Streaming does not make the model generate text faster, but it shortens the wait before the first output appears, so the application feels more responsive. This is particularly beneficial for applications that need immediate feedback or that return long responses.

Streaming Response with CustomGPT.ai RAG API: A Practical Example

Sending messages with streaming via the CustomGPT.ai RAG API endpoint involves interacting with the chatbot to receive responses in real-time. This is how you can do it:

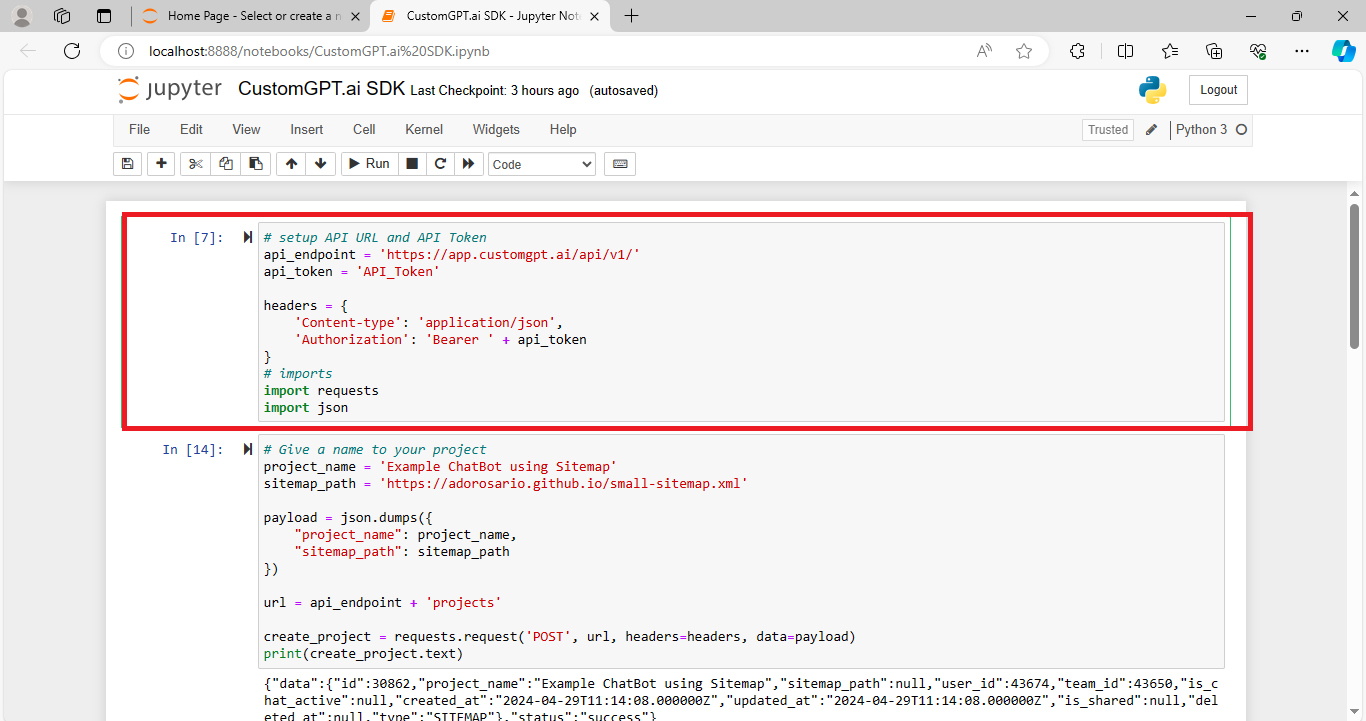

Setting up RAG API URL and Token

The RAG API endpoint and authentication token are configured to access the CustomGPT.ai RAG API.

For details, read the full blog post on how to get your RAG API key.

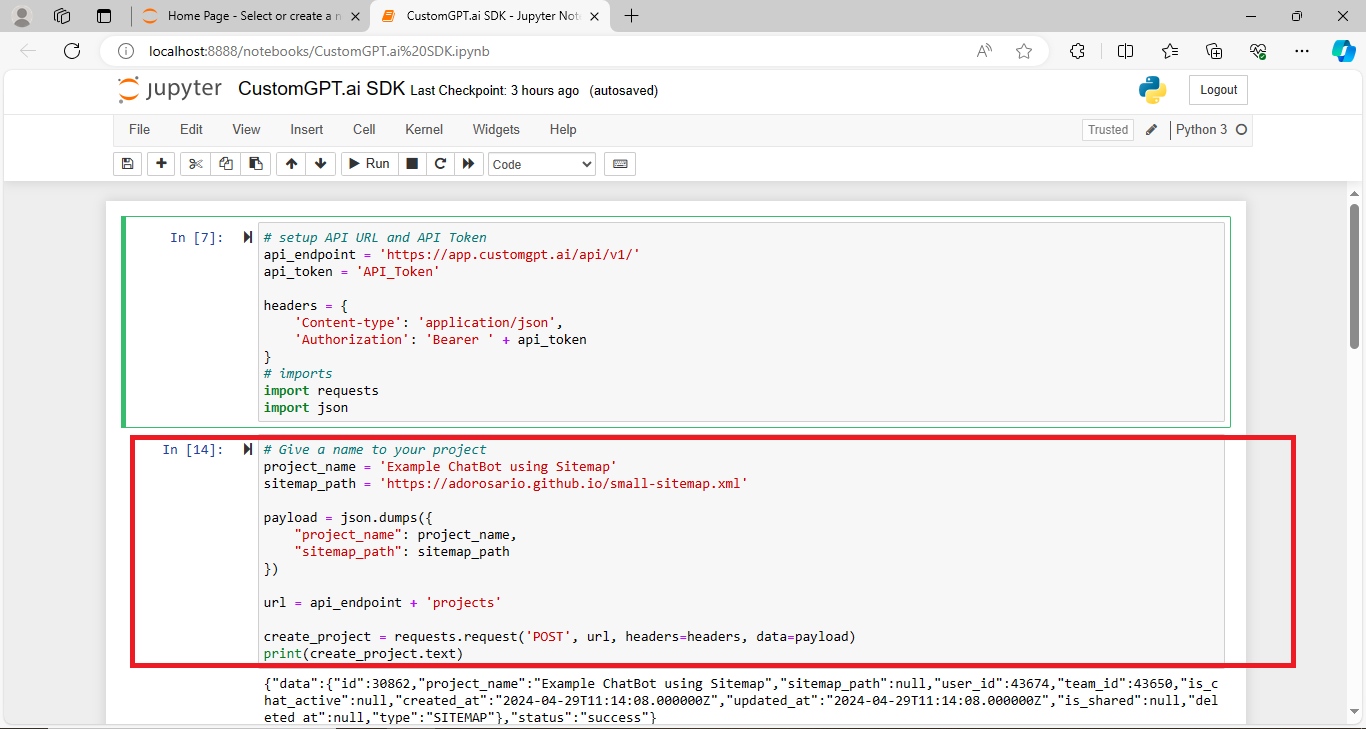
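As a rough sketch of this setup step, the endpoint and token can be kept in two constants and combined into request headers. The base URL, the bearer-token scheme, and the `CUSTOMGPT_API_TOKEN` environment variable name are assumptions made for this illustration and should be checked against the official API reference.

```python
import os

# Assumed base URL for the RAG API; confirm it in the official API reference.
API_ENDPOINT = "https://app.customgpt.ai/api/v1/"

# Read the token from the environment instead of hard-coding it.
# CUSTOMGPT_API_TOKEN is a hypothetical variable name chosen for this sketch.
API_TOKEN = os.environ.get("CUSTOMGPT_API_TOKEN", "YOUR_API_TOKEN")


def auth_headers(token: str) -> dict:
    """Build headers for a JSON request authenticated with a bearer token."""
    return {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
        "Accept": "application/json",
    }


headers = auth_headers(API_TOKEN)
print(sorted(headers))
```

Keeping the token out of the source file makes it harder to leak it by accident when sharing a notebook or committing code.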

Creating a Project

A project is created with a specific name and sitemap path, which defines the structure of the chatbot’s knowledge base.

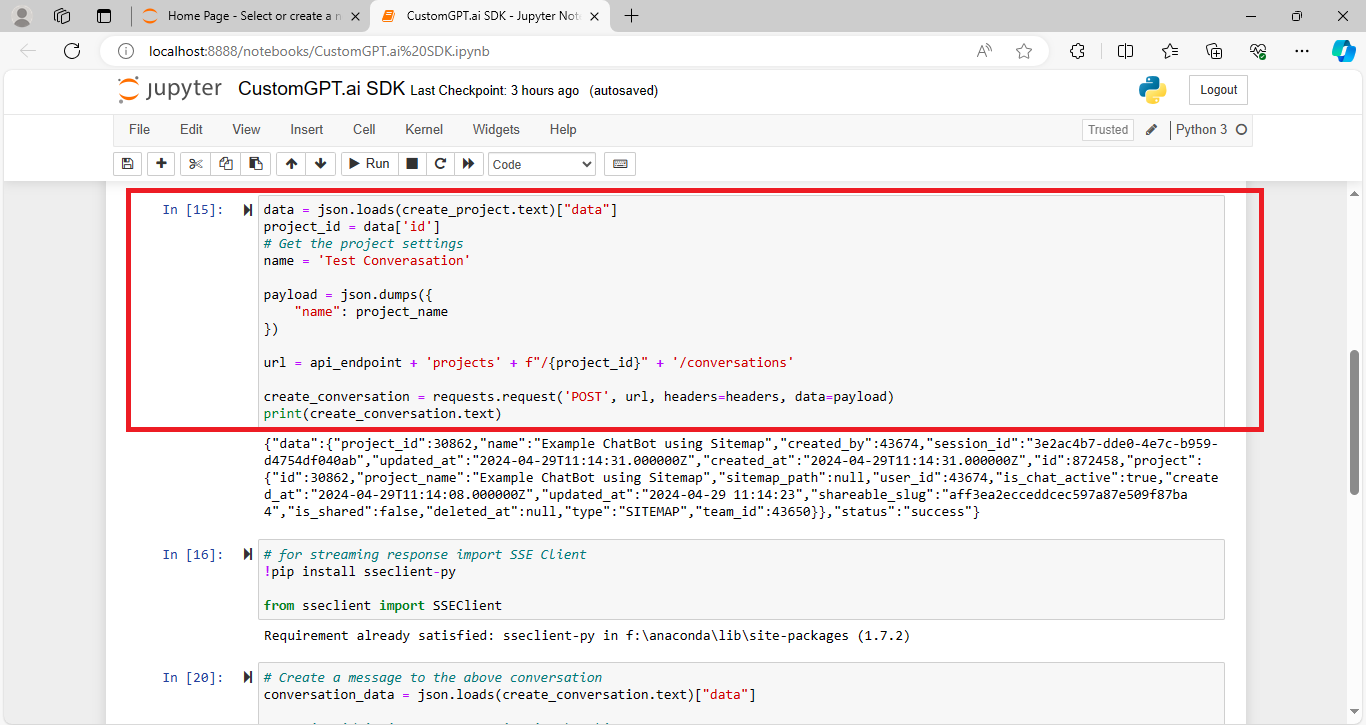

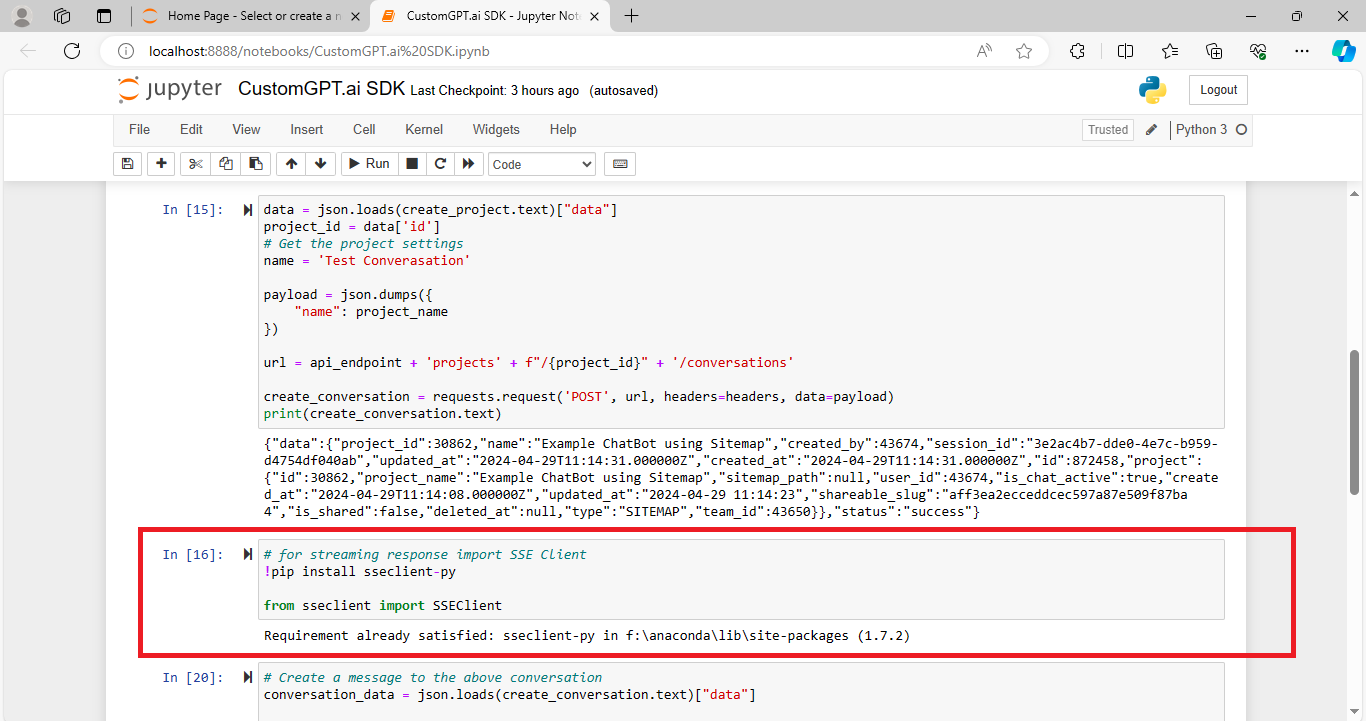

Creating Project Conversation

A conversation is initiated within the project, allowing interactions with the chatbot.

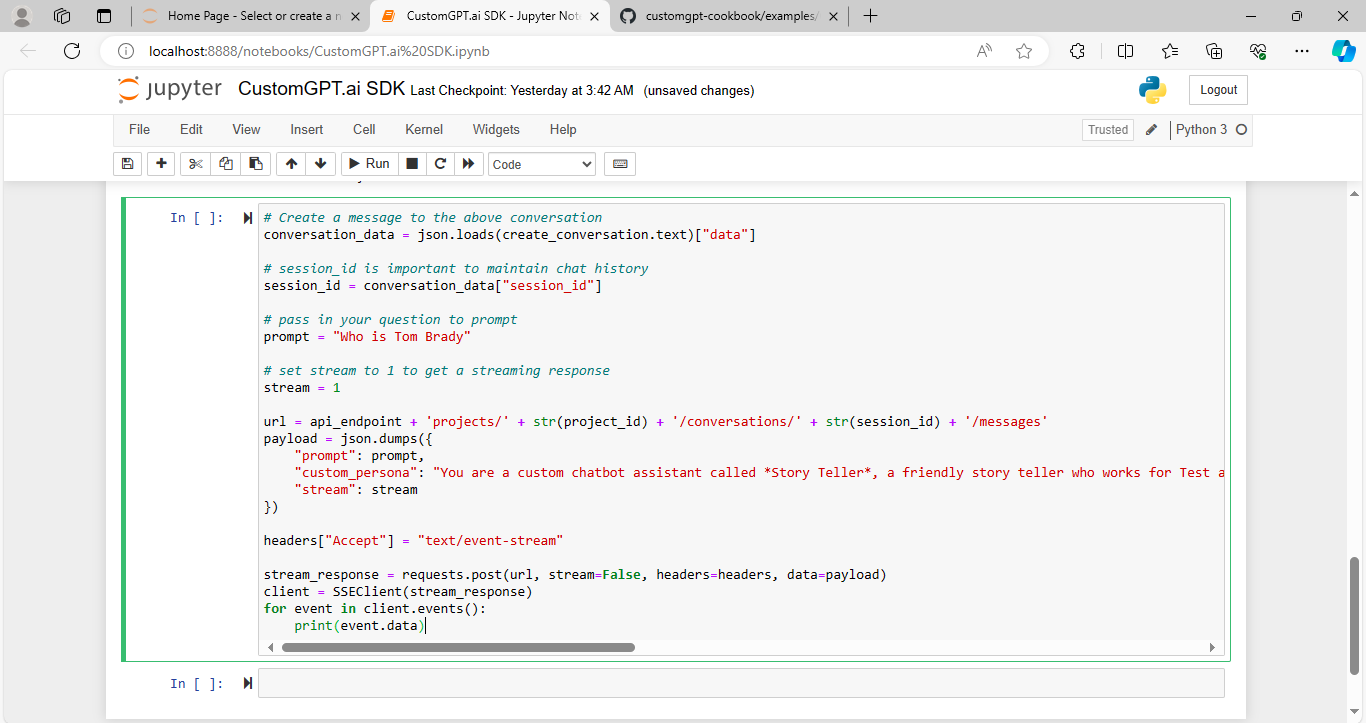
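The two creation steps above can be sketched as a pair of prepared HTTP requests. This example uses only the Python standard library and does not send anything. The paths (`projects`, `projects/{id}/conversations`), the field names (`project_name`, `sitemap_path`, `name`), and the JSON body format are assumptions; the live API may expect a different encoding, such as multipart form data.

```python
import json
import urllib.request

API_ENDPOINT = "https://app.customgpt.ai/api/v1/"  # assumed base URL


def build_post(path: str, token: str, payload: dict) -> urllib.request.Request:
    """Prepare (but do not send) an authenticated JSON POST request."""
    return urllib.request.Request(
        API_ENDPOINT + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Step 1: create a project from a sitemap (field names are assumptions).
project_request = build_post(
    "projects",
    "YOUR_API_TOKEN",
    {
        "project_name": "Example ChatBot using Sitemap",
        "sitemap_path": "https://example.com/sitemap.xml",
    },
)

# Step 2: open a conversation in that project. The project id would come from
# the response to step 1; 123 is a placeholder.
conversation_request = build_post(
    "projects/123/conversations",
    "YOUR_API_TOKEN",
    {"name": "My First Conversation"},
)

for request in (project_request, conversation_request):
    print(request.get_method(), request.full_url)
```

Passing either request to `urllib.request.urlopen` would send it. The project id and the conversation's session id needed for later calls would then be read from the JSON responses.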

Sending Message to Conversation with Stream True

The SSEClient library is imported to handle streaming responses.

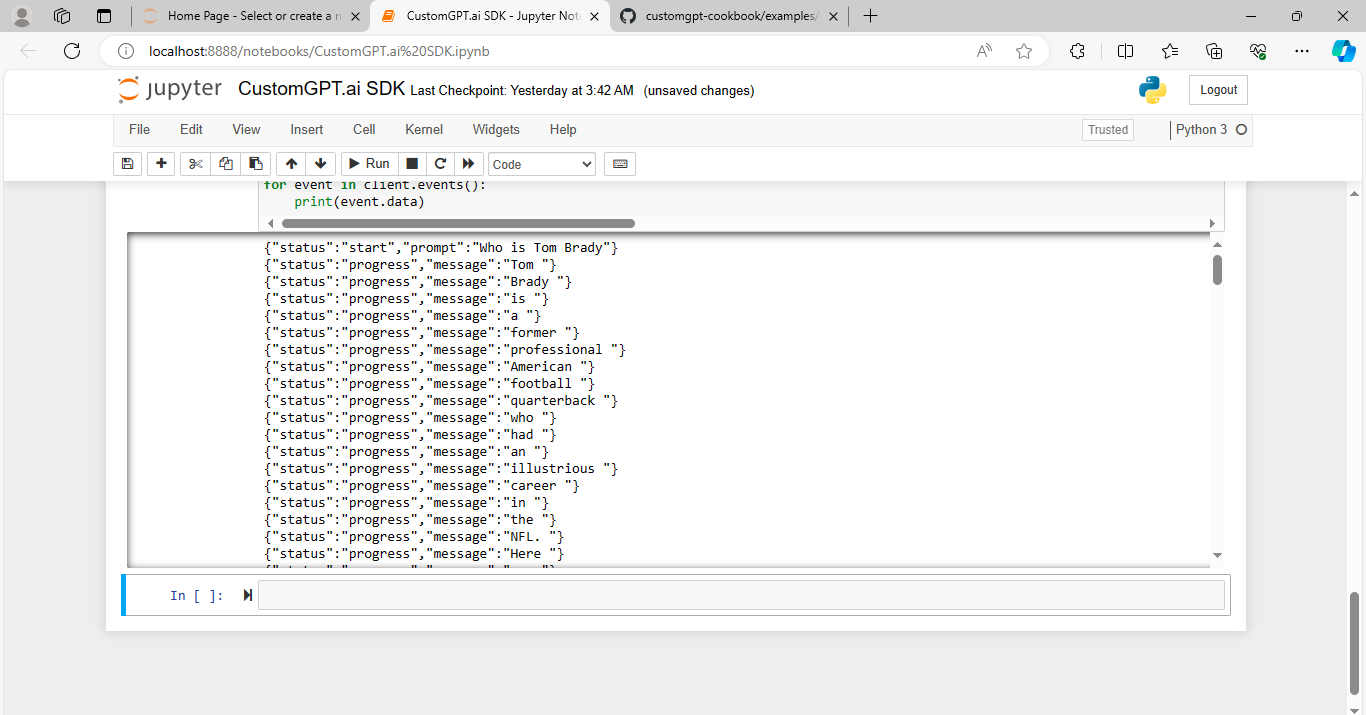

A message is sent to the conversation with streaming set to true, so the reply arrives as a series of events rather than as one payload. Each event’s data is printed as soon as it is received.

Now we will run the code and see how the response is printed.

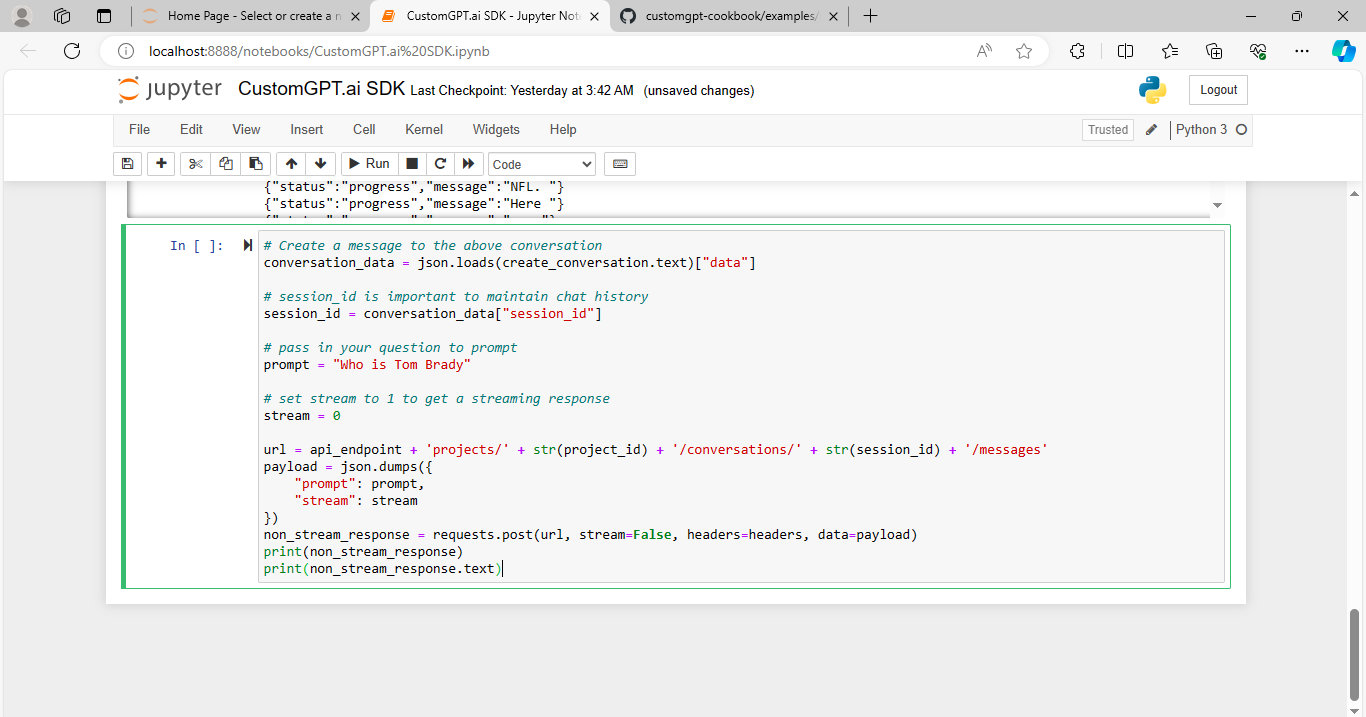

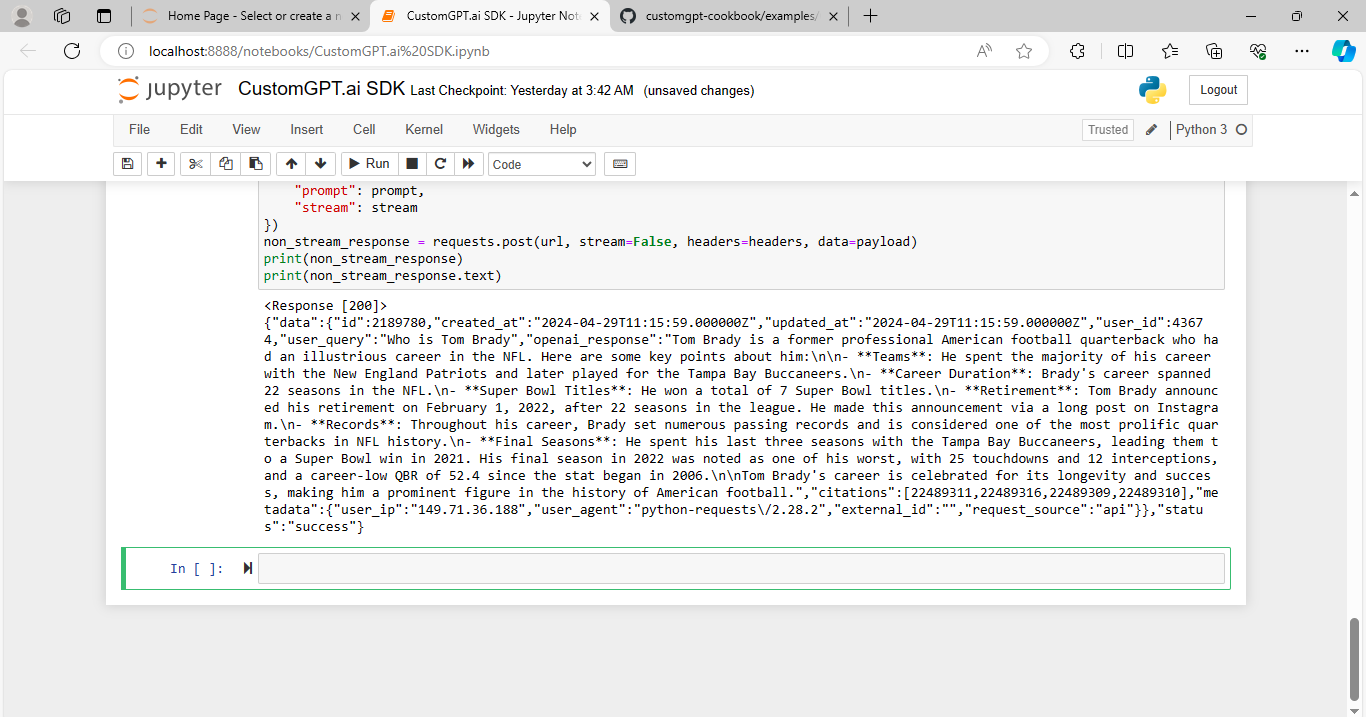
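To show what an SSE client does with a streamed reply, here is a minimal parser for the server-sent events wire format, written against an iterable of text lines so it runs without a network connection. The `Event` type, the `iter_sse_events` function, and the sample payloads (including the `progress` and `finish` event names and the `message` field) are hypothetical; the actual event shape sent by CustomGPT.ai may differ.

```python
import json
from typing import Iterable, Iterator, NamedTuple


class Event(NamedTuple):
    event: str  # event type; "message" when the stream does not name one
    data: str   # payload, with multiple data lines joined by newlines


def iter_sse_events(lines: Iterable[str]) -> Iterator[Event]:
    """Group 'field: value' lines into events; a blank line ends each event."""
    event_type, data_lines = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            if data_lines:
                yield Event(event_type, "\n".join(data_lines))
            event_type, data_lines = "message", []
            continue
        if line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # a single leading space is not part of the value
        if field == "event":
            event_type = value
        elif field == "data":
            data_lines.append(value)


# Hypothetical stream: two partial chunks followed by a completion event.
sample_stream = [
    "event: progress\n",
    'data: {"status": "progress", "message": "Streaming "}\n',
    "\n",
    "event: progress\n",
    'data: {"status": "progress", "message": "works."}\n',
    "\n",
    "event: finish\n",
    'data: {"status": "finish"}\n',
    "\n",
]

answer = ""
for event in iter_sse_events(sample_stream):
    payload = json.loads(event.data)
    if event.event == "progress":
        answer += payload["message"]
        print(payload["message"], end="", flush=True)
print()
```

In practice, an SSE client library such as the one imported in this example handles this parsing; the sketch only illustrates why each event can be printed before the full reply exists.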

Sending Message to Conversation with Stream False

Another message is sent to the conversation, but this time with streaming set to false.

The non-stream response is received and printed.
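The two message calls differ only in the streaming flag, which the following sketch makes explicit. It prepares the requests without sending them. The messages path, the `prompt` and `stream` body fields, and the `Accept` header values are assumptions for illustration, and `build_message_request` is a hypothetical helper.

```python
import json
import urllib.request

API_ENDPOINT = "https://app.customgpt.ai/api/v1/"  # assumed base URL


def build_message_request(token, project_id, session_id, prompt, stream):
    """Prepare (but do not send) a message request with streaming on or off."""
    url = f"{API_ENDPOINT}projects/{project_id}/conversations/{session_id}/messages"
    body = json.dumps({"prompt": prompt, "stream": stream}).encode("utf-8")
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
        # Assumption: a streamed reply arrives as server-sent events,
        # a non-streamed reply as a single JSON document.
        "Accept": "text/event-stream" if stream else "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# 123 and "SESSION_ID" are placeholders for values returned by earlier calls.
streamed = build_message_request(
    "YOUR_API_TOKEN", 123, "SESSION_ID", "Who is Tom Brady?", stream=True
)
buffered = build_message_request(
    "YOUR_API_TOKEN", 123, "SESSION_ID", "Who is Tom Brady?", stream=False
)

print(json.loads(streamed.data))
print(json.loads(buffered.data))
```

With streaming off, the client reads and decodes the response body once. With streaming on, it reads the body incrementally and handles each event as it arrives.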

This process demonstrates how developers can send messages to the CustomGPT.ai chatbot and receive responses with both streaming true and false options, allowing for dynamic interaction and real-time feedback.

Streaming Response with CustomGPT.ai SDK: A Practical Example

In this example, we’ll demonstrate how to send streaming messages to the CustomGPT.ai chatbot using the CustomGPT SDK. Let’s dive into the SDK code snippets:

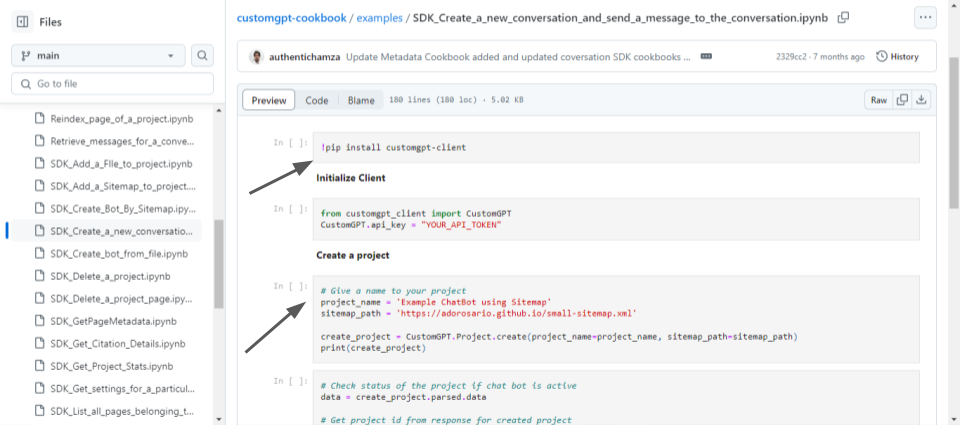

Installing CustomGPT.ai SDK

The CustomGPT.ai SDK is installed using the command !pip install customgpt-client (the leading ! runs the command from a notebook cell).

Initializing Client

The CustomGPT.ai client is initialized with your RAG API token to authenticate RAG API requests.

Creating a Project

A project named ‘Example ChatBot using Sitemap’ is created with a provided sitemap path. The project status and the chatbot activation status are then checked to confirm that creation succeeded, as shown in the above image.

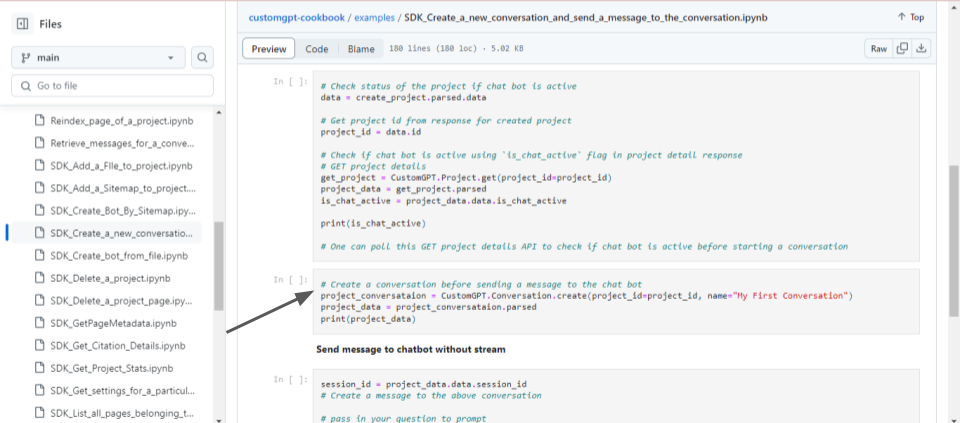

Creating Conversation

A conversation named ‘My First Conversation’ is created within the project.

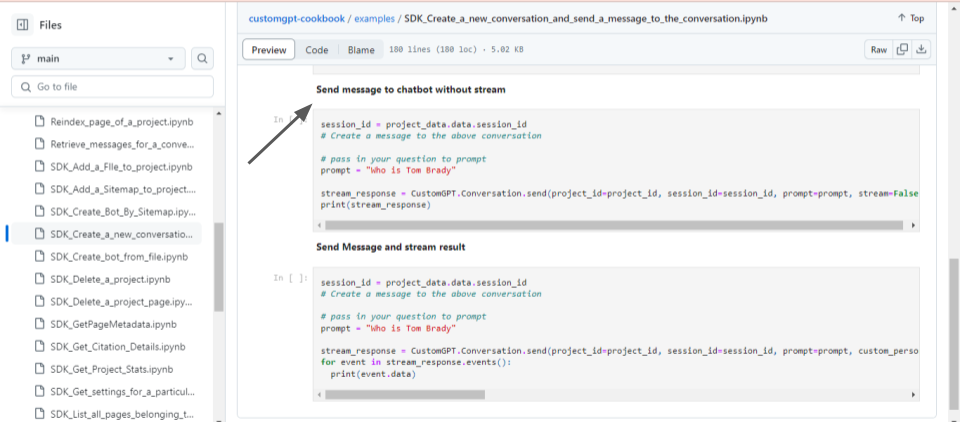

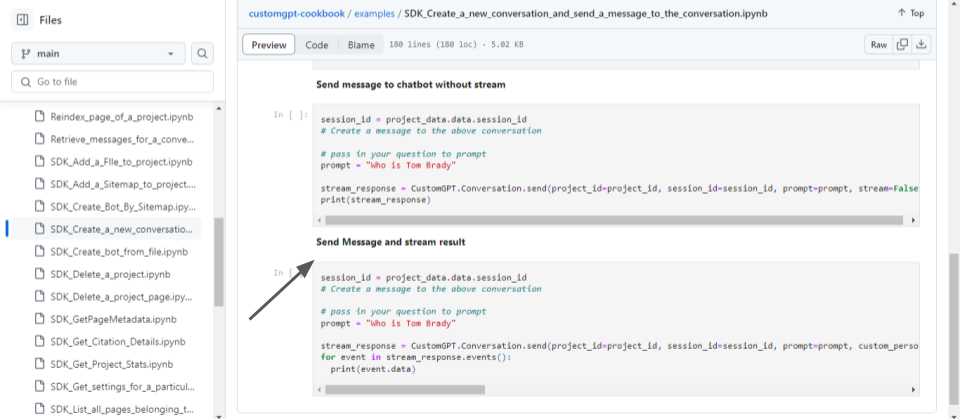
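The SDK steps so far can be sketched as one small helper. The calls `Project.create` and `Conversation.create`, their keyword arguments, and the `parsed.data` attribute paths are written from memory of the SDK and should be treated as assumptions; `create_project_and_conversation` is a hypothetical wrapper. The SDK class is passed in as a parameter so the sketch can be read and exercised without the package installed.

```python
def create_project_and_conversation(sdk, project_name, sitemap_path, conversation_name):
    """Create a project, then a conversation inside it, and return their ids.

    `sdk` is expected to be the CustomGPT class from the customgpt_client
    package with its api_key already set. The attribute paths below
    (parsed.data.id, parsed.data.session_id) are assumptions.
    """
    project = sdk.Project.create(project_name=project_name, sitemap_path=sitemap_path)
    project_id = project.parsed.data.id

    conversation = sdk.Conversation.create(project_id=project_id, name=conversation_name)
    session_id = conversation.parsed.data.session_id
    return project_id, session_id


# Intended usage (needs the SDK and a valid token, so it is left commented out):
# from customgpt_client import CustomGPT
# CustomGPT.api_key = "YOUR_API_TOKEN"
# project_id, session_id = create_project_and_conversation(
#     CustomGPT,
#     "Example ChatBot using Sitemap",
#     "https://example.com/sitemap.xml",
#     "My First Conversation",
# )
```

The returned project id and session id are the two values every later message call needs.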

Sending Message to Chatbot without Stream

A message prompt, “Who is Tom Brady?”, is sent to the chatbot without streaming.

The response from the chatbot is printed.

Sending Message and Streaming Results

The same prompt, “Who is Tom Brady?”, is sent to the chatbot again, this time with streaming enabled.
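A minimal sketch of both SDK calls follows. It assumes the SDK exposes `Conversation.send` with a `stream` keyword and that a streamed response offers an `events()` iterator whose items carry a `data` attribute; these details are recalled rather than confirmed by this article, and `send_prompt` and `collect_stream` are hypothetical helper names.

```python
def send_prompt(sdk, project_id, session_id, prompt, stream=False):
    """Send a prompt through the SDK, with or without streaming.

    `sdk` is expected to be the CustomGPT class from customgpt_client;
    the Conversation.send signature used here is an assumption.
    """
    return sdk.Conversation.send(
        project_id=project_id,
        session_id=session_id,
        prompt=prompt,
        stream=stream,
    )


def collect_stream(stream_response):
    """Print each streamed event as it arrives and return all event payloads."""
    chunks = []
    for event in stream_response.events():
        print(event.data)
        chunks.append(event.data)
    return chunks


# Intended usage (needs the SDK, a valid token, and ids from earlier steps):
# from customgpt_client import CustomGPT
# CustomGPT.api_key = "YOUR_API_TOKEN"
# reply = send_prompt(CustomGPT, project_id, session_id, "Who is Tom Brady?")
# print(reply)
# streamed = send_prompt(CustomGPT, project_id, session_id, "Who is Tom Brady?", stream=True)
# collect_stream(streamed)
```

The same function serves both modes; only the handling of the return value changes.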

With the CustomGPT.ai SDK, you don’t need to build each request by hand, as you do when calling the RAG API directly. After installing the SDK with the command shown in this example, each operation (creating a project, starting a conversation, sending a message) becomes a function call. This simplifies integrating chatbot functionality into your applications and lets you focus on building your project.

Why Stream Text with CustomGPT.ai?

Streaming responses with CustomGPT.ai offers several benefits:

- Users start seeing the response as soon as the first chunk is generated, which reduces perceived wait times and improves the conversational experience.

- Streaming keeps conversations fluid, because users can read the beginning of an answer while the rest is still being generated.

- Real-time responses keep users engaged and immersed in the conversation, leading to a more interactive and satisfying experience.

- Streaming capabilities seamlessly integrate with existing applications and workflows, making it easy for developers to incorporate chatbot functionality.

- By delivering responses as they are generated, streaming minimizes perceived latency and improves the efficiency of chatbot interactions.

- Streaming responses offer flexibility and scalability, allowing chatbots to handle a high volume of concurrent interactions without sacrificing performance.

Conclusion

To wrap up, streaming text with CustomGPT.ai gives developers a practical way to add real-time interactions to their applications. Sending and receiving messages takes little code, whether you call the RAG API directly or use the CustomGPT.ai SDK, and either path lets you create more responsive and engaging chatbot experiences. This capability applies across many domains, from customer support to educational platforms. With streaming in place, developers are well positioned to deliver interactive solutions that improve user engagement and satisfaction.

Frequently Asked Questions

How do you get started with streaming chatbot responses using CustomGPT.ai?

Start by integrating your chatbot with CustomGPT.ai’s RAG API or SDK, then enable streaming so responses are delivered incrementally instead of waiting for one full payload. In a streaming flow, your app should display incoming chunks as they arrive to create a real-time experience.

What is streaming in a chatbot API context?

Streaming is a delivery method where response data is sent continuously in small chunks, so your app can process and show output before the full response is finished. This supports more real-time conversational behavior.

Why does streaming make chatbot interactions feel more real-time?

Because users can see partial output as soon as data arrives, instead of waiting for an entire response to complete. That immediate feedback makes interactions feel faster and more dynamic during conversations.

Can you implement streaming with both the CustomGPT.ai API and SDK?

Yes. This article covers streaming with both the CustomGPT.ai RAG API and the SDK, so teams can apply the same real-time response concept through either integration path.

When should you choose streaming for a chatbot integration?

Choose streaming when your priority is real-time interaction and progressive response delivery. If your use case benefits from showing answers as they are generated, streaming is a strong fit.

What core behavior should your frontend handle in a streaming chatbot UI?

Your frontend should handle incremental updates by rendering response chunks continuously as they arrive. This aligns the UI with streaming’s core behavior: processing data progressively rather than waiting for full completion.